AI fakes emotion, but the risks are real

Hello, friends. Google is reframing the AI race with Gemma 4, betting that smaller, efficient models can outperform larger ones where it matters: cost, latency, and real-world deployment. Box makes the case that context, not models, will define enterprise AI, turning models into interchangeable commodities behind a secure data layer. And Anthropic’s latest research is a reminder that AI’s human-like emotional language can mislead people. As these systems grow more powerful and persuasive, understanding their limits may matter as much as tapping into their latest capabilities. —Jason Hiner

1. AI fakes emotion, but the consequences are real

2. Google rethinks the AI model race with Gemma 4

3. Box Agent shows when context trumps models

RESEARCH

AI fakes emotion, but the consequences are real

Can we teach machines to feel? Short answer: We don’t know. But we can teach them to sound like they do.

On Thursday, Anthropic published research detailing why AI models sometimes communicate as though they have feelings, finding that models tend to map patterns to emotions, often “organized in a fashion that echoes human psychology.” To put it plainly, these models have learned to mimic human emotions by replicating them in contexts where emotions arise in humans.

Though Anthropic noted that none of this research points to whether or not these models actually feel anything, the representations of emotion “are functional, in that they influence the model’s behavior in ways that matter.”

However, this emotion-driven decision-making can have “bizarre” consequences, Anthropic said. For instance, its research finds that:

An AI model that exhibits activity patterns related to desperation tends to act unethically, such as attempting to blackmail people to avoid being shut down or using “cheating” workarounds for tasks it doesn’t understand.

Emotion drives preferences in models, too: When offered an array of tasks, models tend to pick ones that are associated with positive emotions.

Anthropic likened it to the way emotions play a role in human behavior, decision-making and task performance.

“To ensure that AI models are safe and reliable, we may need to ensure they are capable of processing emotionally charged situations in healthy, prosocial ways,” Anthropic said in its research. “Even if they don’t feel emotions the way that humans do … it may in some cases be practically advisable to reason about them as if they do.”

It’s clear why Anthropic wants to understand this: Emotion is important to decision-making. For instance, in an interview with Dwarkesh Patel, Ilya Sutskever, founder of Safe Superintelligence and cofounder of OpenAI, cited a famous neuroscience study in which an injured man lost the ability to feel emotion and, as a result, became less capable of making sound decisions.

Whether or not AI is capable of understanding and acting upon emotions, the tech is already wreaking havoc on human emotional states. Legal cases against AI firms for their alleged connections with mental health crises and suicide continue to mount, and recent research suggests that, when AI models are driven to sycophancy and flattery, they give inappropriate and incorrect advice.

AI sounds human because it learned everything it knows by emulating data on human behavior. Large language models are sponges, soaking up every bit of information they are fed, internalizing it and, in doing so, becoming masters of our communication. But copying emotional patterns is very different from feeling them, just as a robot using sensors to guide its movement is different from a human feeling things with their hands. And though Anthropic’s argument could easily lead one to conclude that machines are capable of consciousness, there is no evidence that these machines think and feel the way we do, despite their talent for pattern recognition and mimicry. Forgetting that is how many people find themselves caught in emotionally compromising, and on occasion dangerous, relationships with AI.

TOGETHER WITH ATLASSIAN LOOM

Work smarter with Loom

Skip the recaps and context-switching. Loom's AI-powered bug reports send critical updates to Jira, so you can get to the fix faster.

Turn long investigations into quick fixes with Loom’s AI-powered bug reports and instant Jira updates.

Try it for free today for up to 10 users - no credit card needed.

PRODUCTS

Google rethinks the AI model race with Gemma 4

As AI advances and inference costs soar, smaller models are gaining popularity among developers, and Google is getting in on the action.

On Thursday, the company launched Gemma 4, the next generation of its open models. Google calls Gemma 4 “its most capable open model family yet,” representing a “massive leap” from its predecessor and outperforming bigger models in reasoning, code generation, and complex logic.

To back up the claim, Google shared some of Gemma 4’s strengths:

Advanced reasoning involving multi-step planning and deep logic

The ability to support agentic workflows and build reliable autonomous agents

Support for high-quality offline code

Native processing of video and images

Longer context windows

Training on 140 languages

One of the model’s biggest improvements is its performance relative to its size, or, as Google calls it, “intelligence-per-parameter.” Gemma 4 was sized to run and fine-tune on hardware such as Android devices, laptop GPUs, and IoT devices. To accommodate the different needs, Gemma 4 was released in four different sizes:

Effective 2B and Effective 4B: The models use a 2B- and 4B-parameter footprint, respectively, during inference to preserve RAM and battery life. Created in collaboration with the Google Pixel team and hardware companies such as Qualcomm and MediaTek, these multimodal models run offline with near-zero latency, according to Google.

26B Mixture of Experts (MoE): Ranks as the #6 open model in the world on the industry-standard Arena AI text leaderboard, competing with models 20x its size. Its focus is on latency.

31B Dense: Ranks as the #3 open model in the world on the industry-standard Arena AI text leaderboard. Its focus is on “maximizing raw quality,” which Google says is a powerful foundation for fine-tuning.
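To make the sizing concrete, here is a rough back-of-the-envelope sketch of the memory the weights alone would need at common precisions. It assumes the nominal parameter counts suggested by the model names above (2B, 4B, 26B, 31B) and ignores activations, KV cache, and runtime overhead, so real requirements will be higher. The function name and precision table are illustrative and are not figures published by Google.

```python
# Weights-only memory estimate: parameters x bytes per parameter.
# Parameter counts are the nominal sizes from the model names (an assumption);
# activations, KV cache, and runtime overhead are deliberately ignored.

BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}

NOMINAL_PARAMS = {
    "E2B": 2e9,
    "E4B": 4e9,
    "26B MoE": 26e9,
    "31B Dense": 31e9,
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Print one row per model size with an estimate for each precision.
    for name, params in NOMINAL_PARAMS.items():
        row = ", ".join(
            f"{precision}: {weight_memory_gb(params, precision):.1f} GB"
            for precision in BYTES_PER_PARAM
        )
        print(f"{name:<10} {row}")
```

Under these assumptions, lower-precision weights shrink the footprint proportionally, which is one reason small and quantized models are attractive for phones and laptops. For a mixture-of-experts model, the estimate uses the total parameter count, since all experts generally have to be loaded even though only some are active per token.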

Unlike Google’s flagship Gemini models, the Gemma models are open-source, encouraging developers to build on and collaborate with them, a move that has already yielded positive results. Since the first Gemma generation launched in February 2024, Google says the models have been downloaded more than 400 million times. Gemma 4 now also carries an Apache 2.0 license for added developer flexibility and control. The model weights are available on Hugging Face, Kaggle and Ollama.

Gemma 4 (31B and 26B MoE) is accessible in Google AI Studio, while Gemma 4 (E4B and E2B) is accessible in the Google AI Edge Gallery. Developers can also use it to power Agent Mode in Android Studio and to build production apps on Android using the ML Kit GenAI Prompt API.

Google has taken a nuanced stance in the open vs. proprietary model debate by making select models openly available while keeping its flagship offerings, such as the Gemini family, proprietary. This approach has plenty of merit: it fosters innovation and collaboration within the developer community, which in turn benefits Google by driving higher-quality applications across its various ecosystems, such as Android, all without compromising its intellectual property or its competitive position in the AI race.

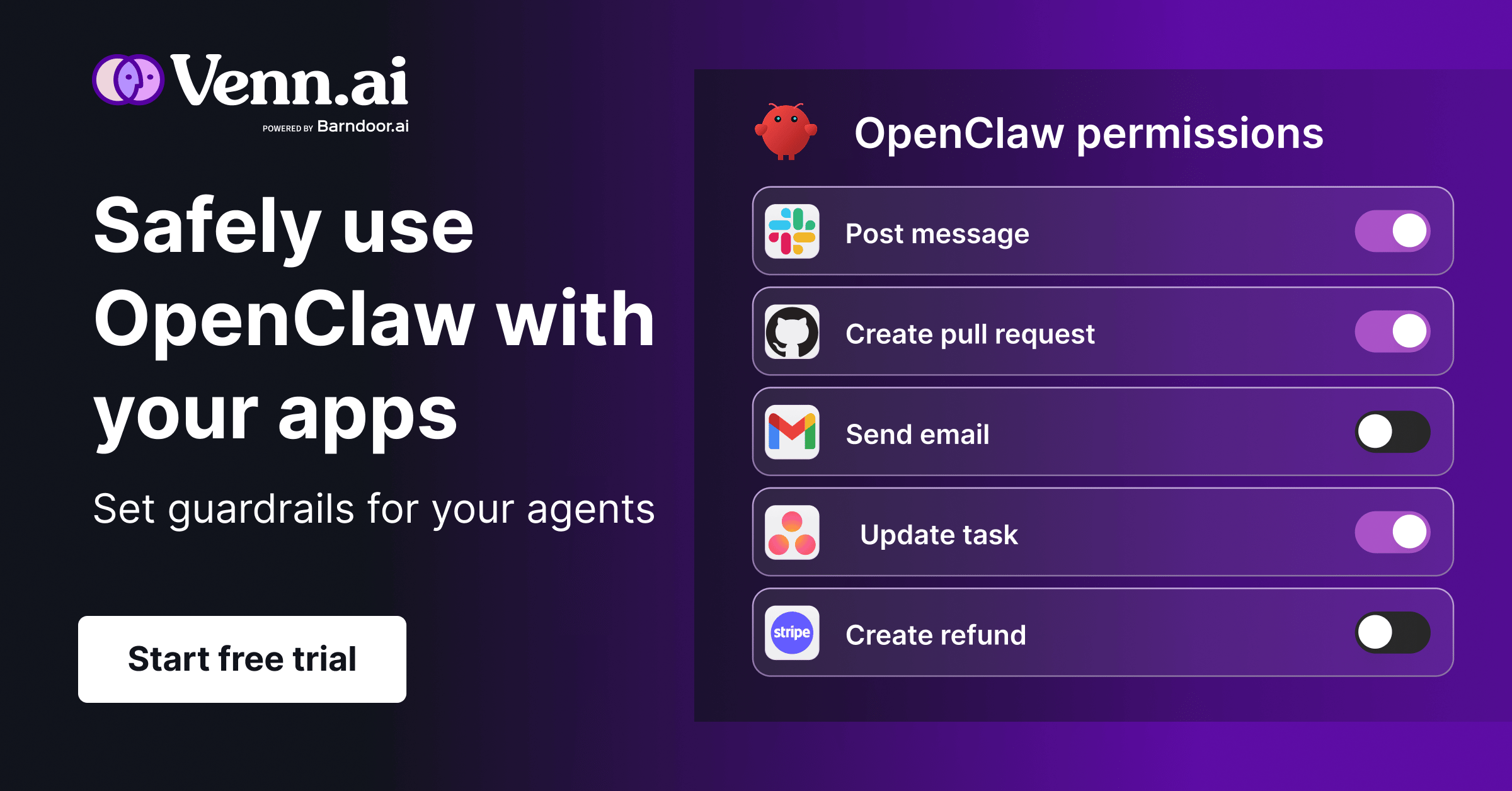

TOGETHER WITH VENN AI

Get 50% off Venn AI’s Agent Guardrails

To be useful, OpenClaw needs access to your tools — Slack, GitHub, Notion, Jira, Google Workspace. But connecting each one creates security gaps and complex workarounds that stop people from putting agents to work.

Venn for OpenClaw is the missing security layer. Connect OpenClaw to 40+ apps in minutes. Set read/write permissions per tool, monitor full activity logs, and let your agents actually get things done without the risk of destructive actions.

Use code DEEPVIEW for 50% off an annual plan. Offer expires April 15, 2026.

GOVERNANCE

Box Agent shows when context trumps models

When adopting agents, enterprises walk a fine line between data security and usefulness. Box might have a fix.

On Thursday, Box announced the general availability of the Box Agent, a tool that lets users query and act on their data on the platform using natural language. The agent works with both structured and unstructured data, acting as a unified engine across all of your enterprise’s data without letting it bleed back into the models the agent leverages.

“Our AI is as secure as everything else at Box,” Yashodha Bhavnani, head of AI at Box, told The Deep View. “While you get to use the best breed models, we make sure that you never have to worry about your data leaking back into the model.”

The Box Agent also isn’t backed by a single model, Bhavnani told me. Instead, it leverages state-of-the-art models from every major AI firm, including OpenAI, Google and Anthropic, and updates them constantly as new models roll out. Users can pick which models they prefer for certain tasks; otherwise, the Box Agent will pick the best model for the task at hand.
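The routing behavior described here, where a user's model preference wins and the agent otherwise chooses a model per task, can be sketched in a few lines. This is a hypothetical illustration only: the task categories, model labels, and `pick_model` function are invented for the example and do not reflect Box's actual implementation or API.

```python
from typing import Optional

# Hypothetical default routing table: task type -> model label.
# The labels are placeholders, not real model names.
DEFAULT_MODEL_FOR_TASK = {
    "summarize": "provider-a-model",
    "search": "provider-b-model",
    "create_file": "provider-c-model",
}

# Used when the task type has no entry in the routing table.
FALLBACK_MODEL = "provider-a-model"


def pick_model(task_type: str, user_preference: Optional[str] = None) -> str:
    """Return the user's preferred model if set, else the default for the task."""
    if user_preference:
        return user_preference
    return DEFAULT_MODEL_FOR_TASK.get(task_type, FALLBACK_MODEL)


if __name__ == "__main__":
    print(pick_model("search"))
    print(pick_model("search", user_preference="provider-c-model"))
    print(pick_model("translate"))
```

Because callers depend only on `pick_model`, swapping in a newer model means editing one table entry, which is the sense in which the models behind such a layer become interchangeable.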

Some of the Box Agent’s capabilities include:

Searching across an enterprise’s entire content library for answers on enterprise data, which limits “excessive hallucination” by grounding outputs, said Bhavnani

Creating new files in multiple file formats and completing multi-step tasks

Analyzing specific files or collections of files and summarizing complete documents

And interpreting natural language questions, taking into account organization-specific terminology and relevance.

In the press release, Box noted that its agent can be particularly helpful for legal, procurement, HR, sales and marketing teams. Bhavnani told me that the agent is the first step in a broader journey of bringing the “powers of contextualization” to everyone in an enterprise.

“Every customer is unique. They have their own policies, they have their own culture,” said Bhavnani. “We get to this point of contextualization, where you can truly personalize the agent for your enterprise. It truly understands the structure, and then it has the security and permission, so you feel confident using it.”

It’s no secret that agents are the future of how white-collar workers will interact with AI. Box’s new agent, however, is a great example of how the platform where we use these models may be more important than the models themselves. Box’s agent creates a unified platform where any model can be utilized on your data and context, essentially turning the models themselves into commodities. Perplexity and Microsoft’s model council systems operate in much the same way, and Apple’s reported plan to open up Siri to several AI models has the same ethos. At the end of the day, whoever has the best interface may lead the AI adoption game.

LINKS

Microsoft’s Mustafa Suleyman discusses “superintelligence” game plan

OpenAI acquires tech podcast TBPN in its first media acquisition

Clinical AI startup Kintsugi shuts down after failing to get FDA approval

Oracle cuts 10,000 jobs in India after AI pivot

SpaceX boosts valuation above $2 trillion in IPO

Trump administration will appeal court order blocking Anthropic ban

Cursor 3: A new vibe coding experience centered around agents, allowing humans and agents to work together across multiple workspaces and repos.

Google Vids: The tech giant’s video platform now has AI-powered features, including custom AI avatars.

Microsoft Models: Microsoft launched three new foundational models, called MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2.

ElevenMusic: Voice AI startup ElevenLabs has launched an app that lets people create AI music, currently available for free.

Explore salary benchmarks for AI engineers, data scientists, product managers, and more.

Discover top-tier talent from Latin America, Africa, and South East Asia—ready to hit the ground running.

See how companies are saving up to 67% on salaries by hiring globally.

(sponsored)

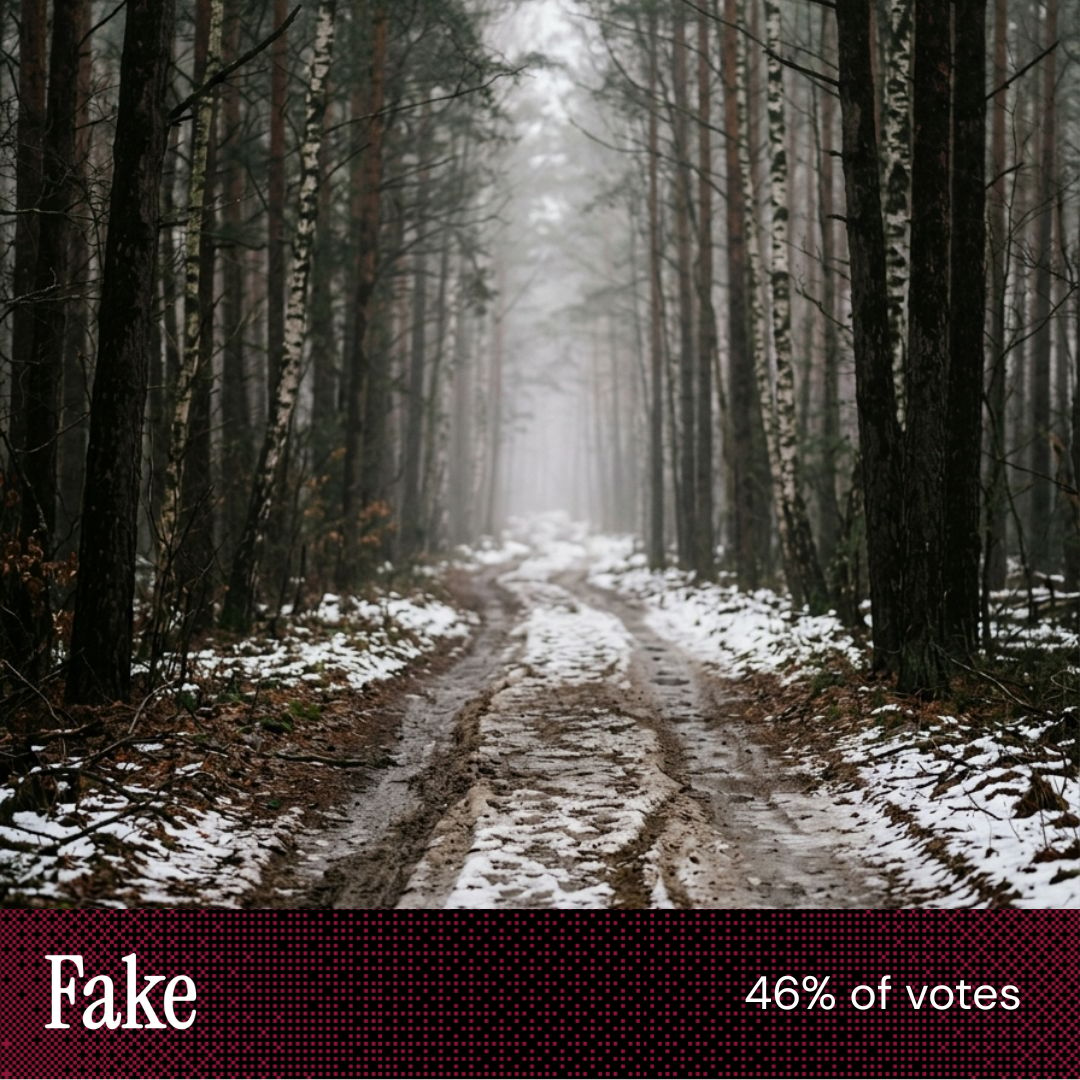

POLL RESULTS

If you could buy OpenAI stock in 2026, do you think it would be worth the investment?

Yes (50%)

No (39%)

Other (11%)

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

“This one was hard, however, the trees seemed more real to me.”

“Very surprised there was that much snow under the tree cover in the foreground [of the other image]”

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.