AI mishap deletes millions of Amazon orders

Welcome back. Anthropic’s newest Claude feature points to one of AI’s most promising uses: helping people understand complex ideas by generating drawings, charts, and other visuals directly inside chat. Microsoft is pushing further into the medical field with Copilot Health, a siloed AI assistant that can connect wearables, health records, and lab results to match you with cited health advice, with strong privacy controls. Meanwhile, Amazon is learning the hard way that AI coding still needs adult supervision, after outages tied to AI-generated code reportedly cost millions in lost orders and triggered new guardrails. AI keeps getting more powerful, but human judgment still matters across the most important parts of the tech stack. —Jason Hiner

1. AI coding bug costs Amazon millions of lost orders

2. Claude brings visual explanations to AI chat

3. Microsoft unleashes AI to fix personal health

BIG TECH

AI mishap deletes millions of Amazon orders

AI coding has emerged as one of the most lucrative and productive use cases for the tech among enterprises. But businesses may be jumping the gun.

Executives at companies like Spotify and Canva have admitted that their developers hardly write code anymore, leading many to shift their responsibilities to orchestration, rather than development. And while the jury is still out on whether AI can entirely automate this work, enterprises are using their AI shifts to justify substantial layoffs.

The numbers are starting to pile up, with companies like Atlassian, Oracle, Block and Amazon slashing thousands of jobs, leaving large swaths of software engineers without work.

Still, even though enterprises seem ready for AI to automate their coders, AI might not be ready for the big leagues just yet. It’s a lesson that Amazon seems to be learning in real time: After a string of outages connected to AI-generated code cost the company millions of lost orders, the e-commerce giant has ordered a 90-day safety guardrail on code written for “tier-1 systems,” or those that directly face consumers, according to Business Insider.

Outages linked to the use of AI coding tools, including the company’s own assistant Q, have emerged since the third quarter of last year, Business Insider reported. The latest outage occurred in early March, taking out the company’s website and shopping app for several hours.

As a result, developers are going to be on a tighter leash:

Amazon’s developers are now required to document changes more stringently and get additional approvals from two other people on staff before making changes to code.

The company is also developing safeguards that combine agentic and deterministic, or rules-based, guardrails.

If there’s one thing we know to be true about AI, it’s that these models are not infallible. Though these tools might be tempting for the speed that they unlock, speed can often be the enemy of quality, changing the “risk profile” that enterprises have to consider, Satyam Dhar, staff software engineer at Galileo and former Amazon and Adobe engineer, told The Deep View. AI tools can create a lot of code that looks like it’s ready for production before it’s actually been pressure tested, he said. “That’s where things get dangerous.”

“The discipline doesn’t change,” said Dhar. “AI helps with scaffolding, but the engineering judgment still has to come from humans.”

Though Amazon’s slip-ups may serve as a lesson for other enterprises to take it slow, the momentum around coding assistants may make that lesson difficult to heed. Along with automating coding itself, major AI firms like OpenAI and Anthropic are now throwing AI at code review and security processes. Enterprises, faced with both the temptation to supercharge productivity and the pressure to keep up with competitors, may lose sight of the risks that they’re taking on by reaching for the shiny new toy.

TOGETHER WITH ANYSCALE

Scaling LLM Fine-Tuning with FSDP, DeepSpeed, and Ray

Hitting memory limits when fine-tuning LLMs is a sign you’re ready to scale. In this technical webinar, we’ll walk through how to fine-tune large language models across distributed GPU clusters using FSDP, DeepSpeed, and Ray.

We will dive into the real systems problems: orchestration, memory pressure, and failure recovery. You’ll see how Ray - the open source compute framework used by companies like Cursor, xAI, and Apple - integrates with PyTorch. We’ll cover how to launch and manage distributed training jobs, configure ZeRO stages and mixed precision for better memory efficiency, and handle checkpointing.

Seats are limited to keep the session interactive.

PRODUCTS

Claude brings visual explanations to AI chat

This new Claude update is a visual learner’s dream.

On Thursday, Anthropic updated Claude to generate visuals in real time, including custom charts, diagrams, and other visualizations in-line within its responses. The visuals are meant to function as interactive drawings that help you understand the topic at hand. It's like watching “Claude drawing on a whiteboard,” the way a teacher would to explain something.

The drawings are also temporary and can change or disappear as a conversation evolves. For example, in one of the demo videos, a user asks, “Explain how to make a cool paper airplane.” In response, Claude created a visual with step-by-step directions for folding the paper, which might otherwise have been more difficult to understand from text alone.

In another example, a user asks about the periodic table, and Claude builds an interactive one that users can click around to learn new details. Claude will decide the best time to create a visual, but users can also prompt it to do so. Once it creates something, you can ask it to tweak it or go in deeper. The feature will be on by default for all plan types, including free plans.

This feature is similar to the one Google released with Gemini 3’s November launch, which allowed users to create interactive tools and simulations to better understand content. That launch was well-received, and this one will likely be too, given its practical application.

When AI first became popular, one of the biggest concerns was that easy access to cheating would erode the education process. Since then, however, there has been a growing understanding of the value AI tools can bring to educational settings, largely enabled by the release of new tools that provide greater clarity and context. Beyond dedicated features, such as ChatGPT study mode or Claude Learning mode, it is smaller, incremental features like this one that truly highlight the possibilities for using AI to enhance education. Whether you are intentionally seeking to strengthen understanding or just need a quick answer, the visual cues encourage deeper learning.

TOGETHER WITH RESOLVE

Less pain, more gain: How AI for prod keeps AI code running

Code from Claude is about to hit prod, but it doesn’t have to be painful.

Engineering teams at Coinbase, DoorDash, and Zscaler use Resolve AI to triage alerts, resolve incidents, and debug production using AI that works across code, infra, and telemetry.

The results mean 70% faster MTTR, 30% fewer engineers pulled in per incident, and thousands of saved engineering hours.

Learn how Coinbase evaluated AI for prod or download the free AI for Prod evaluation guide.

PRODUCTS

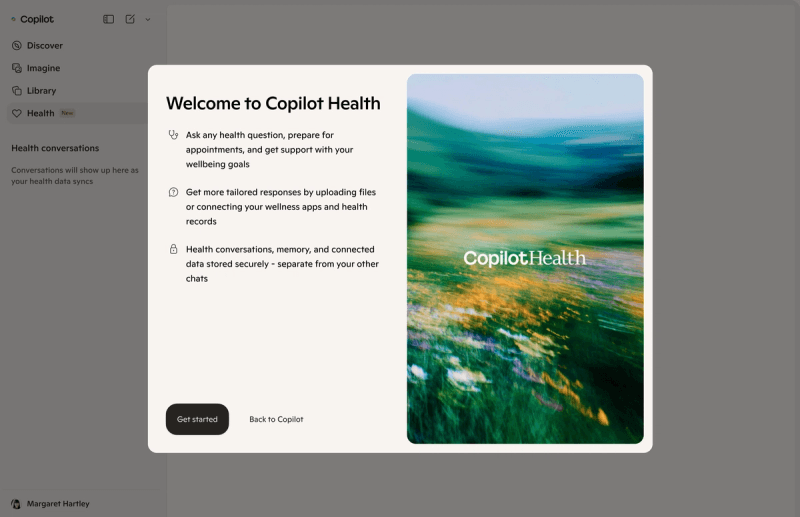

Microsoft unleashes AI to fix personal health

What if your AI knew everything your doctor does? As tech companies race to work on that idea, Microsoft has become the latest to throw its hat in the ring.

On Thursday, Microsoft launched Copilot Health, a separate space within Copilot that acts as a hub for all of your AI-powered medical assistance needs. It can use all of your health data, including from wearables, US hospital health records, provider organizations, and even lab test results, to provide insights and answer questions.

“We are really on the cusp of building a true medical superintelligence, one that can learn everything about you, all of your health conditions from your wearable data, your electronic health records, and use that to provide support and insights and intelligence at your fingertips,” said Mustafa Suleyman, Microsoft AI CEO, in a press briefing.

While Microsoft Copilot already answers 50 million consumer health questions daily, with this experience, Microsoft aimed to improve the quality of responses with:

Information from credible health organizations across 50 countries

Clear citations to source materials

Expert-written cards from Harvard Health

Assistance with connecting to doctors who accept insurance

To quell concerns about reliability and security, Microsoft shared that Copilot Health was developed with an internal clinical team and informed by an external panel of over 230 physicians and that data is protected with “industry-leading safeguards.”

A standout to me was that you can only access Copilot Health in its siloed space, with your health queries never mixed into your regular Copilot experience, and that it prioritizes user consent when accessing health data. It is being rolled out to users via a waitlist as a precaution.

This product is a direct response to similar offerings from competitors such as OpenAI and Anthropic, and it appears to be a good strategy, given demand. OpenAI has previously said 40 million people worldwide rely on ChatGPT daily for medical advice, a number comparable to the 50 million daily queries Microsoft touted. Amazon announced this week that its Health AI solution, which originally launched only in One Medical, had such strong demand that the company expanded access to the Amazon website and app. If people are organically turning to AI to help navigate health queries, then it is only logical to let them connect their personal data and get more accurate results.

LINKS

Adobe says CEO Shantanu Narayen will step down once successor is named

Microsoft, Meta commit $50 billion to data center leases in recent quarter

Robotics firm Sunday raises $165 million at $1.15 billion valuation

India plans $11 billion fund to bolster domestic chips

How Amazon pushes employees to use more AI in their daily work

xAI hires two senior leaders from Cursor to boost AI coding

Google Maps: Google gave Maps a Gemini facelift, with a new Ask Maps conversational experience, 3D view, and more.

Claude: It can now generate custom charts, diagrams and visualizations in-line within its responses.

Perplexity Computer: The agentic platform is now available to Pro subscribers, while Max subscribers receive monthly credits and higher spending limits.

Flow by Google: The AI creative studio was updated to make assets easier to implement, allowing users to use “@” to reference specific assets in their prompts.

AI Trainer & Evaluator: RLHF, preference data annotation and post-training model alignment

AI Safety Researcher: Robustness testing, alignment evaluation and safety benchmark development

Prompt Engineer: Systematic prompt design, evaluation frameworks and LLM behavior optimization

Computer Vision Engineer: Image and video model development, object detection and multimodal systems

(sponsored)

A QUICK POLL BEFORE YOU GO

Will humanoid robots have a key part to play in the AI revolution?

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

“[This image] looked like an iPhone picture, while [the other image] had more depth and detail than I expected.”

“[This image] had roads that seemed to have no purpose and destination; one offshoot (left side) leads to a plateau. Why? In a desert climate, who would live there without water? Can't farm that plateau.”

Take The Deep View with you on the go! We’ve got exclusive, in-depth interviews for you on The Deep View: Conversations podcast every Tuesday morning.

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.