Claude booms, uptime falters, users get new limits

Welcome back. Reddit and Wikipedia are drawing hard lines against AI-generated content while also licensing their data to AI labs to train models. The irony is hard to miss. With new Codex plugins, OpenAI keeps getting more serious about enterprise, pivoting from novelty to utility. And Claude's meteoric growth is hitting real-world limits. Its outages have dropped uptime below 99%, and its new peak-hour usage rates amount to surge pricing for chatbots. Growth is great until infrastructure can't keep up. All three stories point to a common trend: AI is maturing fast, and the friction is showing. —Jason Hiner

1. Claude booms, uptime falters, users get new limits

2. Reddit, Wikipedia fight AI while feeding it

3. Codex gets plugins as OpenAI goes enterprise

PRODUCTS

Claude’s rise brings outages and usage limits

Claude is exploding in popularity, and that has consequences.

Anthropic's quirky chatbot has been on a tear during the opening months of 2026. In fact, it's been gaining new users so quickly that it's facing serious growing pains. In recent weeks, Claude has faced a series of outages that have caused it to dip below the 99% uptime standard for most applications.

And because of Claude's growing popularity and the company's difficulty handling the rapid influx of new users, Anthropic is now adjusting how quickly users burn through their limits during peak hours. On weekdays between 8:00 am and 2:00 pm ET, users across the Free, Pro, and Max tiers will all hit their session limits faster, an Anthropic engineer explained on X.

He estimated that the changes will impact about 7% of users and recommended that people shift highly intensive background jobs to non-peak hours.

So what does this mean in practice?

It's surge pricing for chatbots: This is essentially what Uber does with ride prices during rush hour. But instead of charging more money, Anthropic is trying to change user behavior to spread out the requests made to Claude.

Most users won't be impacted: If all you do is use Claude to ask questions and help create documents, then this move is good for you, as it will likely increase Claude's uptime and performance for those tasks.

Peak hours cost more per action: Before this change, 1 prompt used 1 unit of your Claude usage budget, and the cost stayed the same all day. Now, during peak hours, 1 prompt might equal 1.5 to 2 units (Anthropic hasn't said what the exact formula will be).

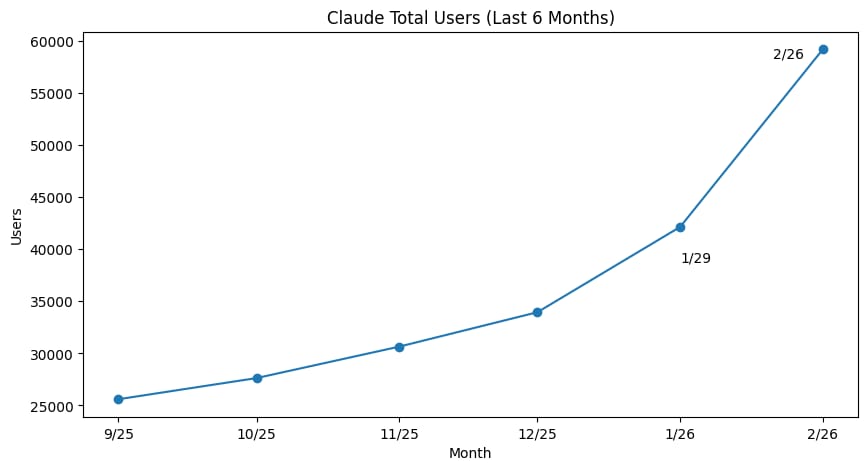

The move doesn't come as a surprise, since Claude's growth has been so meteoric in such a short time. It's no secret how expensive chatbots are to run and how hard it is for these companies to obtain the GPUs and compute necessary to grow. A report from consumer transaction analysis company Indagari, analyzed by TechCrunch (see chart below), shows that Claude's paid users skyrocketed in the first two months of 2026.

Chart credit: TechCrunch

While Claude's growth is impressive, we should also put it in perspective. Anthropic doesn't disclose how many monthly active users Claude has, and outside estimates put the number anywhere from 30 million to 180 million. Meanwhile, OpenAI's ChatGPT is at 900 million, which makes ChatGPT roughly 5x to 30x larger than Claude. And while Claude is rapidly onboarding new users, ChatGPT still signs up twice as many new subscribers each week.

Nevertheless, since mid-2025, most of the people I know who use generative AI most intensively have told me they've made Claude their primary chatbot because they find it produces higher-quality results. With so many people switching from ChatGPT to Claude in recent months, it will be important to see whether the newcomers share that perception and stick around. Many people choose the easier option, including the one that doesn't suffer outages, over the higher-quality one. That still leaves plenty of opportunity for competitors to win over AI users in the months and years ahead.

TOGETHER WITH SLACK

The AI That Already Knows Your Work — Meet the New Slackbot

You may know Slackbot as the familiar helper inside Slack — today, it's something entirely new. Slackbot has been rebuilt for the agentic era as a personal agent that understands your work, your context, and how decisions get made.

Unlike other chatbots, it's grounded in your conversations, files, and workspace context, so it provides support that's deeply personal to you — without asking you to explain yourself or change how you work. With Slackbot, simple conversation leads to real action: organizing information, drafting content, and moving work forward without ever leaving Slack.

In this on-demand webinar, hear from customers like Engine about how they're already using Slackbot, and see a live demo from the team that built it. Walk away with real tactics for bringing personalized AI into your team's daily collaboration.

BIG TECH

Reddit, Wikipedia fight AI while feeding it

Wikipedia and Reddit built their reputations on human-curated content. In the age of AI, both platforms are fighting to stay human-first.

On Wednesday, in a Reddit post, CEO Steve Huffman emphasized the company’s mission to keep humans at the center and preserve Reddit as a place where “people can talk to people.” He also outlined plans to make it easier to tell AI-written content apart from human-written content.

The biggest change is that accounts that use automation for allowed tasks, also known as “good bots,” will be clearly labeled with an “[App]” tag, signaling to users that they are interacting with a machine instead of a human. Other changes include:

Removal of bots: “Bad bots” or nefarious bot content will continue to be removed before users see it. At the moment, that's about 100K accounts per day, according to Huffman.

Human verification: Reddit may occasionally ask users to verify they're human if content appears AI-generated, though this will be rare and focused on humanity rather than identity.

Reporting: Users will be able to report suspicious content more easily.

Huffman also acknowledged that many people use AI to help them write, so Reddit isn’t curbing all AI-generated content. Overall, responses to the post were positive, with Reddit users celebrating that the platform would remain human-first.

Reddit’s announcement followed a similar decree from Wikipedia, which last week enacted a new policy prohibiting the use of large language models to generate or rewrite article content. At most, editors may use them for copyediting or translation.

These changes come as demand for human-created content rises and the lines between authentic and machine-generated content become increasingly blurred.

Reddit's web traffic has continued its upward trajectory, with Similarweb data showing approximately 12% year-over-year growth in February. Wikipedia has not seen the same boost in the AI era, with traffic declining 8.8% year over year in February, according to Similarweb.

An emphasis on human content could help both platforms make more immediate gains with readers, according to Joseph Levi, co-founder and CEO of Noise Media, who is exploring how brands can stand out in the age of AI.

“For Reddit and Wikipedia, cracking down on AI-generated content is ultimately about protecting trust, and trust is what underpins their value in both SEO and AI discovery,” said Levi. “Search engines and AI tools increasingly reward credible, distinctive, human-informed content, so preserving that standard is likely to strengthen their authority over time.”

Both companies currently have deals that let AI companies train on their data, making their positions on AI-generated content all the more nebulous. Wikimedia Enterprise has deals with Amazon, Meta, Google and Perplexity, and Reddit has a long-standing partnership with Google.

Keeping bots off platforms helps preserve their integrity, but there's an obvious paradox: Licensing that same data to AI companies to train their models only fuels the very problem they're trying to solve.

One of AI-generated content's biggest appeals is its ability to deliver exact answers in conversational language, feeding into a natural human desire to do less work, a temptation that's hard to resist. As a result, simply producing high-quality content is often no longer enough to compete, even as more people come to value human-first platforms. To systematically curb the spread of AI-generated content, meaningful limits on what these models can train on are needed. A logical first step is for platforms to stop willingly surrendering their proprietary content, and we're already seeing publishers move in that direction. That sets up both legal battles against AI companies and a possible future in which AI companies will have to pay to train their LLMs on data published on the open web. That could put traditional publishers, as well as sites such as Wikipedia and Reddit, in a stronger position.

TOGETHER WITH MERGE

What hundreds of product and engineering teams told us about building agentic integrations

Hundreds of AI product and engineering teams told us the same three things:

MCP servers are overwhelming their engineering bandwidth

Agent-driven actions are creating security blind spots

They have no clear signal on which integrations to build first

So we went deeper and put together our findings in the 2026 state of agentic integrations report. Here's what the best teams are actually doing about it.

PRODUCTS

Codex gets plugins as OpenAI goes enterprise

Another day, another coding tool upgrade, this time from OpenAI.

On Thursday, OpenAI began rolling out plugins in its agentic coding platform, Codex. OpenAI describes plugins as “installable bundles for reusable Codex workflow” that can contain skills, apps, and MCP servers, with more components coming soon.

Simply put, plugins let users integrate ready-made workflows or app integrations, such as Google Drive, GitHub or Slack, into their Codex workspace. These plugins, created by OpenAI, are available in the Codex directory, but users can also create their own plugins or import them from another ecosystem.

Beyond expanding what users can do in Codex, plugins also streamline processes and make it easier to share setups across projects or teams. These advantages will likely boost developer productivity, but the bigger story is competitive: Claude Code users have had access to similar functionality for a while, so closing that gap underscores OpenAI's recent push to capture a larger enterprise audience.

That push appears tied to a larger goal: preparing for a possible IPO, something CFO Sarah Friar acknowledged directly when pressed on the topic.

“Over the long run, look, we have to build a company that’s ready to be a public company,” Friar told CNBC.

Part of that effort means cutting costs and leaning into more lucrative opportunities, such as enterprise.

This week, OpenAI announced the shutdown of its Sora generative AI video platform and app, just six months after launch, and, as a result, ended its $1 billion content partnership with Disney. Separately, the Financial Times reported that OpenAI was “indefinitely” putting on hold its ChatGPT “adult mode,” which was supposed to allow users to create erotic content.

Friar added that the enterprise business is “a very profitable business at scale, and that’s how we will build a sustainable business model.” Currently, 60% of OpenAI's revenue comes from consumers and 40% from enterprise, but Friar expects that to reach a 50-50 split by year's end.

Anthropic is the clearest example of how an enterprise focus can pay off. The company has prioritized enterprise offerings, and as a result, roughly 80% of its revenue comes from enterprises, according to CEO Dario Amodei.

Having closely tracked OpenAI and the broader AI industry since 2022, I find this more focused approach encouraging, as it finally addresses a persistent problem: AI for the sake of AI. The race has occasionally seemed oriented around producing the flashiest developments rather than the most practical ones, prioritizing novelty over utility. OpenAI's new enterprise focus should help ensure these tools are actually solving real problems for real people, which is a win for everyone.

LINKS

Anthropic admits testing a new model much more capable than predecessors

Physical Intelligence is looking to raise $1B in funding at an $11B valuation

Musk considers allocating up to 30% of SpaceX IPO to retail investors

Meta’s Oversight Board says “Community notes” aren’t fact-checking

Anthropic IPO could come as soon as the fourth quarter

OpenAI’s ad pilot surpasses $100 million annualized revenue

Codex: Plugins now allow users to work with popular work apps, such as Slack

Runway: The content-generating platform launched an Ad Concepter App

Search Live: Google’s AI Search feature is now accessible globally

Suno: The AI music platform launched Suno v5.5, with voice cloning and custom models

POLL RESULTS

Do you think Apple can still play a meaningful role in the AI ecosystem?

Yes (77%)

No (21%)

Other (2%)

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

“The shadows of smaller flowers behind the poppy in the other image threw me. I thought they were AI nonsense.” “The background is more realistic.” “More detailed.”

“The poppy pods in the background convinced me.” “In [the other image] both the foreground and the background were blurred. It seems to defy the logic of depth of field.” “[The other image] seemed to have a lot of ‘fuzzy’ fill.”

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.