Exclusive: How to protect your vibe-coded apps

Welcome back. OpenAI is trying to shape the AI policy conversation before regulators shape it for them, backing new safety bills while positioning itself as a steward of “AI for humanity.” But as AI labs gain more influence, the tension between public good and shareholder pressure is only going to intensify. Meanwhile, Anthropic is betting that small businesses could become one of AI’s biggest growth markets and is offering them ready-made agents and workflows. And as vibe coding accelerates software development, Synthesia shared a practical six-step playbook for protecting AI-generated code before increasingly powerful cyber models turn today’s vibe coding shortcuts into tomorrow’s vulnerabilities. —Jason Hiner

1. Exclusive: How to protect your vibe-coded apps

2. OpenAI backs AI safety bills, questions persist

3. Anthropic wants to fill SMB's AI gap with agents

GOVERNANCE

Exclusive: 6-step technique to get Mythos-proofed

With AI coding tools poised to transform the job of a software engineer, the question remains: how trustworthy are these models’ outputs?

While vibe coding is helping engineers be more productive than ever, the code itself isn’t always as secure as it could be. And as models become more powerful, those vulnerabilities can be easily exploited: Data from Experian, published last week, found that 40% of the 5,000 data breaches it handled last year were driven by AI. With AI creating a cybersecurity powder keg, Synthesia decided to do something about it.

On Thursday, the company published a blog post detailing its approach to automating code security reviews, offering a recipe that any company can use and retrofit to generally available models. The company claims this six-step technique achieves cybersecurity performance similar to Anthropic’s Mythos.

To break it down simply:

The first two steps involve prepping the code to identify potential vulnerabilities and mapping every possible entry point for untrusted input.

For the next two steps, the system sends AI agents to hunt for three types of vulnerabilities: injection attacks, authorization issues, and business logic flaws. Duplicate findings are removed, saving tokens by avoiding the need to thoroughly investigate them.

In the final two steps, the AI agents double-check each vulnerability and then summarize what actually needs to be fixed.
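For readers who want to picture how those stages chain together, here is a minimal Python sketch. It is not Synthesia's implementation: the split into six functions follows the summary above, and every name in it (`Finding`, `review`, the `agent` and `verifier` callables, the `markers` heuristic) is hypothetical. The model calls are left as plain callables so any generally available model could be plugged in.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# The three vulnerability classes named in the summary above.
CATEGORIES = ("injection", "authorization", "business_logic")


@dataclass(frozen=True)
class Finding:
    category: str
    location: str  # hypothetical format, e.g. "line 12"
    summary: str


# Hypothetical interfaces: in practice each would wrap a call to a model.
Agent = Callable[[str, str, str], list]   # (category, entry_point, code) -> findings
Verifier = Callable[[Finding, str], bool]  # (finding, code) -> is it real?


def prepare(code: str) -> str:
    """Step 1: prep the code. Here that only means normalizing line endings."""
    return code.replace("\r\n", "\n")


def map_entry_points(code: str, markers: Iterable[str]) -> list:
    """Step 2: list lines where untrusted input may enter (naive substring match)."""
    markers = tuple(markers)
    return [
        f"line {number}"
        for number, line in enumerate(code.split("\n"), start=1)
        if any(marker in line for marker in markers)
    ]


def hunt(code: str, entry_points: list, agent: Agent) -> list:
    """Step 3: run one hunt per vulnerability category and entry point."""
    findings = []
    for category in CATEGORIES:
        for entry_point in entry_points:
            findings.extend(agent(category, entry_point, code))
    return findings


def deduplicate(findings: list) -> list:
    """Step 4: drop repeats before the more expensive verification step."""
    seen = set()
    unique = []
    for finding in findings:
        key = (finding.category, finding.location)
        if key not in seen:
            seen.add(key)
            unique.append(finding)
    return unique


def verify(findings: list, code: str, verifier: Verifier) -> list:
    """Step 5: keep only findings that a second check confirms."""
    return [finding for finding in findings if verifier(finding, code)]


def summarize(findings: list) -> list:
    """Step 6: turn confirmed findings into short, actionable lines."""
    return [f"[{f.category}] {f.location}: {f.summary}" for f in findings]


def review(code: str, agent: Agent, verifier: Verifier,
           markers: Iterable[str] = ("request.", "input(")) -> list:
    """Chain the six stages in order and return the final report lines."""
    prepared = prepare(code)
    entry_points = map_entry_points(prepared, markers)
    candidates = deduplicate(hunt(prepared, entry_points, agent))
    return summarize(verify(candidates, prepared, verifier))
```

Deduplicating before verification mirrors the token-saving point above: the costlier double-check only runs on unique findings.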

Martin Tschammer, head of security at Synthesia, told The Deep View that this technique is a solution to a problem that the company aimed to tackle internally first. As a software firm, it’s using AI and coding agents to ship products faster than ever.

“Security review in particular depends on careful, deliberate analysis so it's exactly the kind of work that breaks down under velocity pressure,” Tschammer said.

Tschammer also said this system offers several particular advantages. For instance, applying the technique to the models your enterprise uses means they understand your specific codebase rather than just code in general.

It also filters out unnecessary work before findings reach your team, and it doesn’t require a frontier model. Plus, at an average of less than $4 per review, it’s an affordable way to prevent vulnerabilities from slipping through the cracks.

“You do not have to wait for Mythos, or some other security-branded model, to become available,” Tschammer said. “In fact, we believe you don’t have the luxury to wait. If you want to stay ahead of the curve, you need to act now.”

With models like Anthropic’s Mythos and OpenAI’s GPT-5.5 offering an unprecedented level of cyber capability, AI’s ability to supercharge both cyberattackers and cyberdefenders has come sharply into focus in recent months. At the same time, vibe coding and AI broadly have empowered companies to slash their workforces and ship faster. But if the increased vulnerabilities and attacks have taught us anything, it’s that speed is the enemy of perfection. With stakeholders pressuring executives for returns, the question remains whether the enticing capabilities and promise of this technology will outweigh the security risks that it poses. Will measures like the ones Synthesia is taking be enough to make a difference for your organization? They certainly can't hurt. If you're using vibe coding to build software, you're probably introducing a large number of vulnerabilities as well, so you should prepare by taking steps like this before Mythos and GPT-5.5 arm your attackers.

TOGETHER WITH GRANOLA

Real Conversations = Rich Context

By now, you almost certainly know how much all of us at The Deep View love Granola, the notetaking app that saves us around 10 hours per week per person. But their latest update, Spaces, is taking that seamless collaboration and documentation to the next level… and we’re experiencing it firsthand.

Essentially a team workspace with folders and chat built in, Spaces uses your conversations to give context to any question your team asks. From sales asking “Why are we losing this deal?” to researchers wondering “What are users consistently asking us for?”, you can ask anything and Granola will read all of the Spaces content to immediately give you an answer.

POLICY

OpenAI backs AI safety bills, questions persist

Amid a murky AI regulatory landscape, OpenAI is making moves.

On Wednesday, the AI giant announced its endorsement of two AI safety bills, the Kids Online Safety Act and SB 315, a bill on frontier AI. In its global affairs blog, the Prompt, the company said that the former aims to create stronger protections for young AI users, while the latter supports a "national framework for frontier AI safety."

Though these bills target different aspects of safety, both aim to strengthen safety requirements and accountability.

The Kids Online Safety Act, or KOSA, sets clear protections for minors' social media use. OpenAI noted that new models necessitate "AI-specific rules," and that the bill is complementary to the work it’s doing at the federal and state level. In the blog, Chief Global Affairs Officer Chris Lehane said, "We can’t repeat the mistakes made during the rise of social media."

SB 315 takes aim at frontier AI models, calling for clear requirements around safety, transparency, incident reporting and accountability for bleeding-edge systems. The Illinois bill follows similar legislation in New York and California, and OpenAI is supporting the bill to advance "a risk-based approach focused on the most capable models and highest-consequence harms."

Beyond its support for these bills, OpenAI also announced the opening of its Washington, DC AI workshop, aiming to provide a space to foster "much-needed conversations about who will really benefit from AI." The workshop will host training and discussions for elected officials, regulators, civil servants, educators, workers, nonprofits, and industry and community leaders to engage with and discuss the technology.

In the blog post, OpenAI said that, rather than being a commodity, intelligence should become a "global utility," akin to electricity, available to people, businesses and institutions "as much as they need, where and when they need it."

"Our job is to make that intelligence cheaper, better, and more abundant over time – not to dominate every vertical ourselves – so that more people can build, solve problems, and expand what they’re able to do," the blog post reads.

AI’s regulatory path is far from set in stone. OpenAI has the ability to impact how that path is shaped by supporting bills early and hosting conversations. While the company says in its post that the aim is not to "dominate every vertical" by becoming a utility, it is undeniable that the company holds an extraordinary amount of power to shape everything from the laws that govern it to the way people use it. And given that the goal of any company in a capitalist system is to increase its bottom line, it’s always important to consider the incentives. OpenAI is especially good at striking the right notes in its rhetoric. We still need to see evidence from OpenAI, and the other leading AI labs, that they have the will to make tough decisions that will support high-minded goals such as "ensuring AGI benefits all of humanity" even when it goes against their economic interests. That's going to become even more challenging when OpenAI, Anthropic, and others become public companies and have to answer to shareholders.

TOGETHER WITH MONGODB

Build Smarter AI Agents

Coding agents are changing how software gets built. But agents are generalists and they don't follow what production systems demand. MongoDB Agent Skills give your coding agent the MongoDB expertise it needs to generate reliable schemas, queries, and code that follow proven best practices.

Ship faster with high-quality, efficient MongoDB code

Stay context-aware using the MongoDB MCP Server

Enforce consistency across solo and team workflows

Available alongside the MongoDB MCP Server in native plugins for Claude Code, Cursor, Gemini CLI, and VS Code.

PRODUCTS

Anthropic wants to fill SMB's AI gap with agents

Artificial intelligence has advanced to the point where it can function as a thought partner, an employee, or an entire team. Small businesses, often stretched for resources, may need that help the most.

On Wednesday, Anthropic launched Claude for Small Business, a new product aimed at helping small businesses more easily take advantage of AI. The tool packages several connectors and workflows that small businesses rely on, allowing small business owners to more easily leverage Claude within them.

Here are the specs:

The launch includes 15 ready-to-run agentic workflows that span several industries, including finance, operations, sales, and HR, as well as 15 skills built on repeatable tasks identified by small business owners.

For now, connected apps include Intuit QuickBooks, PayPal, HubSpot, Canva, Docusign, Google Workspace, and Microsoft 365.

To get started, all users need to do is connect their tools with Claude, install the Claude for Small Business one-click plug-in, pick a task, and run.

The tools available were clearly chosen to meet existing demands. For instance, Canva, which the company claims is one of the most widely used apps within Claude, can now more easily offer small business owners enterprise-focused assistance such as building on-brand campaign assets.

Beyond the tools themselves, Anthropic partnered with PayPal to release a free online course on using AI to run a small business, the AI Fluency for Small Business course, which is available on demand today.

Small businesses account for 44% of US GDP. Still, despite these firms being a prime target for this technology, adoption has lagged behind that of enterprises, according to the blog post. That is a problem that Anthropic seeks to fix with this launch.

“Small businesses make up nearly half the American economy, but they've never had the resources of bigger companies,” Daniela Amodei, co-founder and president of Anthropic, said in the post. “AI is the first technology that can finally close that gap.”

Anthropic is also supporting the Workday Foundation Solopreneurship Accelerator Program, meant to support 15 aspiring solo entrepreneurs with resources, as well as partnering with three Community Development Financial Institutions: the Accion Opportunity Fund, Community Reinvestment Fund USA, and Pacific Community Ventures.

In recent months, we have seen nearly every AI lab refocus its attention on enterprise, as that is where the profits are. That is because these companies have endless budgets dedicated to the AI transformation, banking on ROI in the near future. However, small businesses are even better positioned to take advantage of these tools, as they are actually strapped for resources. In most cases, instead of replacing current employees, AI could actually help fill in gaps that would otherwise remain unfilled for these owners, showcasing real augmentative capabilities of AI.

LINKS

Memory chip shortage due to AI demand widens stock performance

Waymo recalls 3,800 robotaxis after some drove into flooded roadways

Amazon unveils Alexa for Shopping, an agentic AI assistant

Stanford HAI launches lab to study science of AI in the workplace

Mistral is developing an AI model for European banks without Mythos access

Anthropic’s revenue run rate reportedly on track to hit $50 billion by June

Ponder: The startup released an agentic video editor for filmmaking.

WhatsApp Incognito Chat: Users can now talk to its AI models without anyone, including Meta, being able to access their conversations.

Microsoft MDASH: Microsoft has unveiled an agentic vulnerability discovery system, available to enterprises starting in June.

Adaption AutoScientist: The company introduced a new tool that helps models train themselves.

Anthropic: Forward Deployed Engineer, Applied AI

OpenAI: Threat Modeler, Preparedness

Cedars-Sinai: Artificial Intelligence Research Faculty

Applied Intuition: Research Intern - Reinforcement Learning, Robotics

A QUICK POLL BEFORE YOU GO

Do you trust AI model companies to help shape regulation around their technology?

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

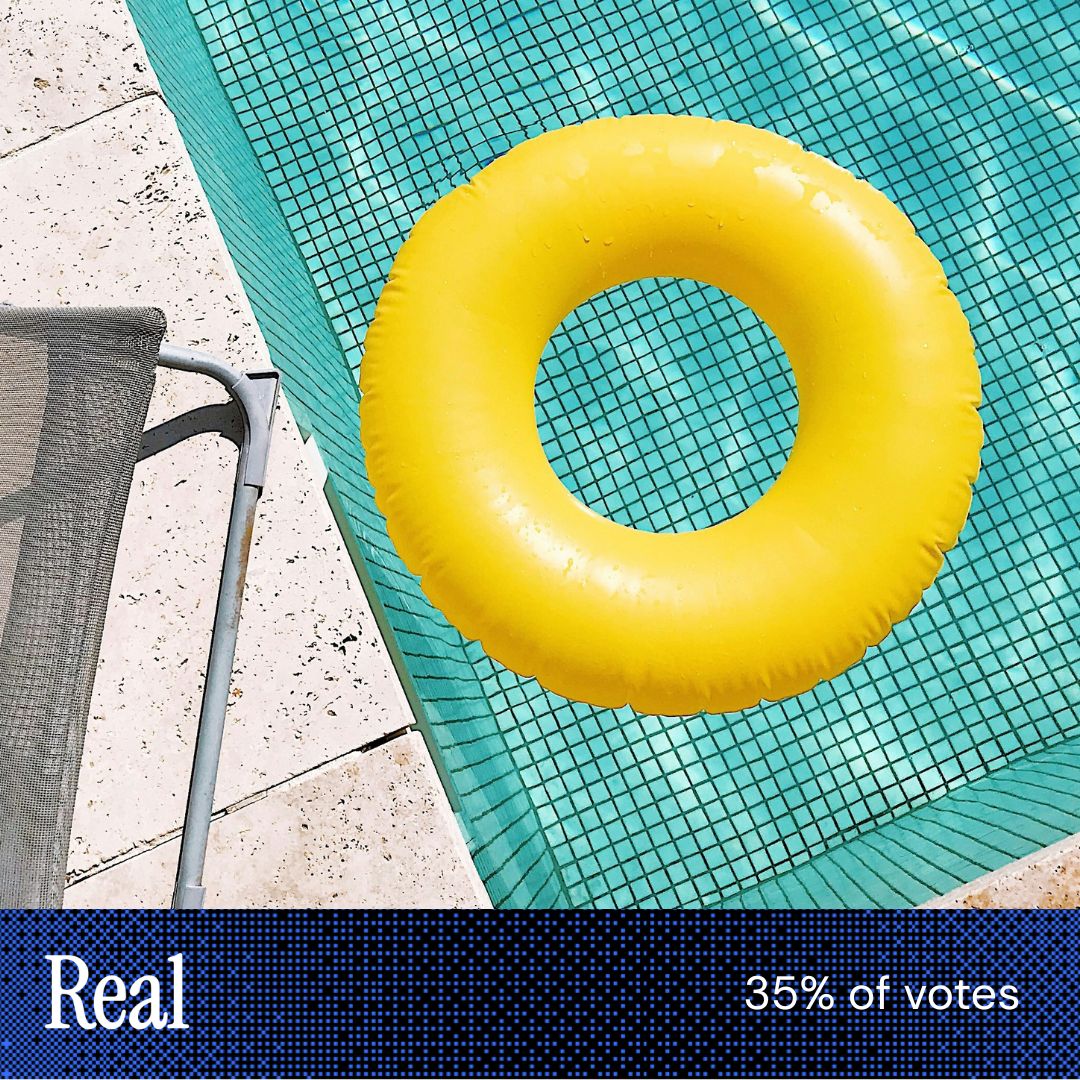

“There are imperfections in [this image] that cannot be replicated by AI.”

“[The other image] doesn’t contain as much detail and depth, tiles seem flat, the floating ring doesn't appear to have the ridges we would expect for it to have.”

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.