Nvidia chases scale as user-owned AI emerges

Welcome back. Coding agents are reshaping work, turning developers into architects and offering an early signal of a broader transformation of white-collar work. Google’s Stitch introduces “vibe design,” pushing AI deeper into the creative process with tools that accelerate, not replace, designers. At Nvidia GTC, a bigger tension emerged. Nvidia is driving a new era of agentic scaling, where more compute fuels more intelligence. At the same time, a decentralized vision of user-owned AI is materializing, aiming to give individuals more control over their data, privacy, and security. The future of AI may hinge on how that balance between centralization and decentralization plays out. —Jason Hiner

1. User-owned AI emerges while Nvidia pushes scale

2. Google introduces 'vibe design' with Stitch

3. Beyond coding, AI's job transformation is coming

BIG TECH

Nvidia chases scale as user-owned AI emerges

At GTC 2026, Nvidia committed to unlocking new levels of compute for the industry's leading innovators. At the same time, another narrative about bottom-up innovation was unfolding in other corners of GTC.

On a quiet veranda at the San Jose Convention Center, The Deep View spoke to Illia Polosukhin, co-founder of Near, about "user-owned AI," where the technology is decentralized, all your data is kept separate from the models, and agents are treated as a secure, separate OS.

While Near doesn't have the marketing muscle of OpenAI, Anthropic, and other leading players in the AI ecosystem, it's counting on the kind of bottom-up, word-of-mouth momentum that turned OpenClaw into a viral hit. Its aim is to spread the word about a safer, more empowering alternative to the way most people experience AI today.

Meanwhile, Nvidia CEO Jensen Huang emphasized that more compute means more intelligence, more intelligence means more value, and more value means more revenue. Huang told a full-capacity audience at the San Jose Civic on Wednesday that “intelligence is directly correlated to the amount of compute that you have.”

Amid the veritable firehose of announcements at the conference, Nvidia posed an entirely new scaling law to continue pushing the narrative of more: Agentic scaling.

Following pretraining scaling, post-training scaling and test-time scaling, this new scaling law involves AI not just talking to humans, but to other AIs, vastly increasing demand for low-latency, large-context inference.

These multi-agent systems, Nvidia claims, will unlock multi-trillion-parameter models, turning daylong requests into hours. To do so, however, these systems need to get a lot faster, with Nvidia emphasizing the need to deliver tokens 15 times faster while running models 10 times larger.

“The best open [models] are trillion-parameter models, and … if you look at the proprietary models, they’re more than a trillion parameters,” Kari Briski, VP of generative AI software at Nvidia, told The Deep View. “Now, the fourth scaling law is not just about one reasoning model. It’s about a swarm of agents with subagents. Agents talking to agents.”

Polosukhin is also all-in on agents, including the claw revolution. Near has IronClaw, which offers a more secure version of OpenClaw, similar to NanoClaw and Nvidia's NemoClaw. The company also launched a secure agent marketplace designed to run agents that can offload work and earn money, all within its decentralized, security-first platform.

GTC COVERAGE BROUGHT TO YOU BY IREN

IREN Signs $9.7 Billion Agreement with Microsoft to Deploy AI Cloud Infrastructure

IREN will deliver large-scale GPU clusters housed in IREN’s liquid-cooled data centers, which are under construction at its 750 MW campus in Childress, Texas.

The GPUs will be deployed in four phases through 2026 (Horizon 1-4) and will collectively provide 200 MW of critical IT load. Learn more.

It was impressive to see Nvidia racing ahead with all of its vast resources to help scale the exponential growth of the AI ecosystem. Of course, the companies that can most benefit from these upgrades are the ones with the most resources to purchase compute (Google, Microsoft, OpenAI, Anthropic, etc.), and that risks further centralizing the AI industry around a few leading players. Contrast that with the decentralized vision of Near's Polosukhin, a former Google researcher who co-authored the paper that introduced the transformer, the architecture that launched the generative AI revolution. Polosukhin speaks emphatically about the risks of centralizing too much power and the importance of having an alternative. Though Nvidia spent the week preaching about the importance of open models and the bottom-up OpenClaw revolution, tacitly acknowledging the need for a balanced AI ecosystem, the inevitable winners of the exponential scaling of compute will be the ones that can pay for it.

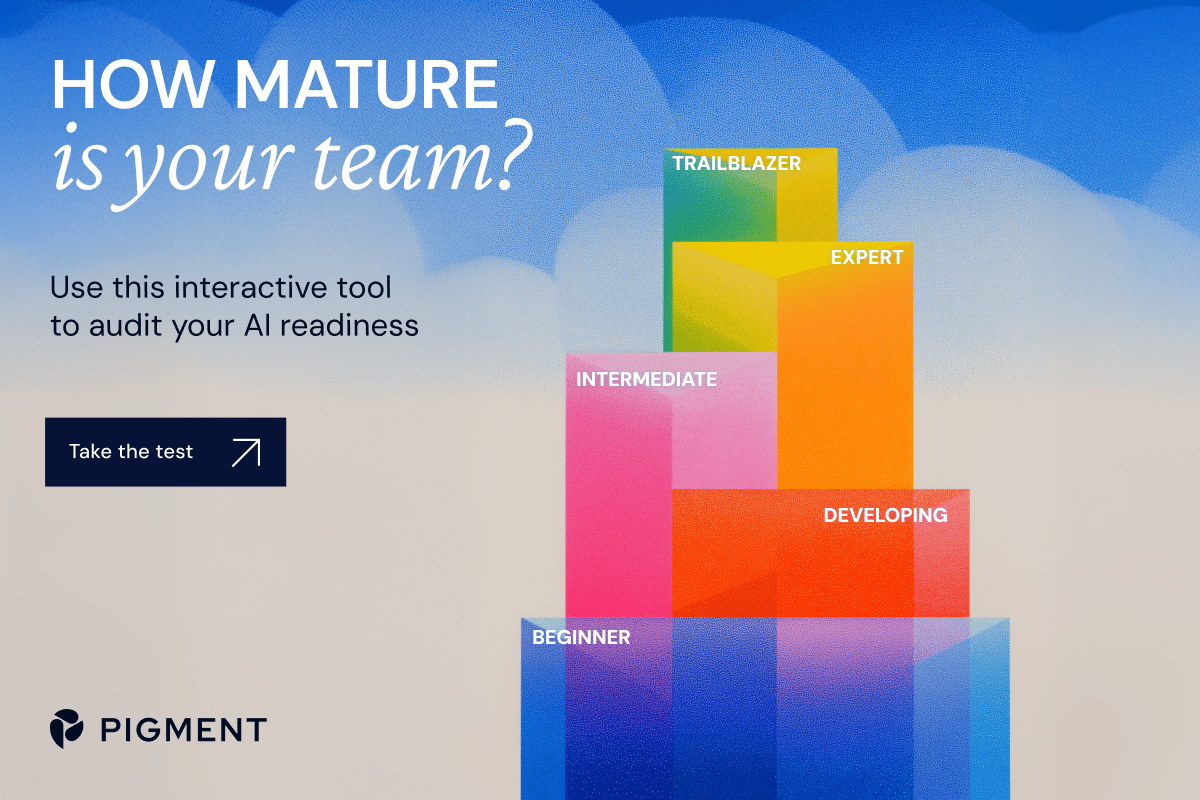

TOGETHER WITH PIGMENT

How AI-Ready Is Your Finance Organization?

When the future of AI and business transformation is now, it’s the perfect moment to ask yourself:

How AI-ready is your finance organization?

Discover it with Pigment’s AI Readiness Assessment - a free interactive tool that highlights your strengths, reveals growth opportunities, and provides a tailored action plan to accelerate your AI journey.

Don’t miss this chance to set your strategy in motion. Take the assessment.

PRODUCTS

Google introduces 'vibe design' with Stitch

You may know vibe coding, but now Google wants you to know about vibe design.

If you can use natural language prompts to build complex websites and apps, doing the same for design should be no different, and that's exactly what Google's Stitch sets out to do. The redesigned tool, launched in beta on Tuesday, lets users of all experience levels collaborate with AI to create and iterate on software designs.

The Stitch tool is intended to let users skip the initial, tedious steps of creating wireframes or rough outlines that map out the layout, and jump straight into building websites and apps with UIs focused on users’ goals and inspirations.

While Stitch originally launched in May 2025 and came to Google Labs in December 2025, the redesign adds tools and features meant to improve the experience for users, including:

New UI: Stitch was completely redesigned to be an AI-native infinite canvas (similar to Figma)

Design Agent: The agent can reason across the entire project’s evolution

Agent manager: The manager can help users track their progress, stay organized, and run multiple tasks in parallel

DESIGN.md: A new agent-friendly markdown file that can be used to export or import design rules to other design and coding tools

Voice capabilities: Users can speak directly to the canvas to provide feedback or create new elements

While Stitch can do a lot for users of all experience levels, The Deep View’s designer, Lucas Crespo, does not see it as a tool that sets out to replace designers, but rather as one that helps them get things done sooner.

“To me, it feels closer to downloading a Figma UI kit, or starting from a hyper-custom template,” said Crespo. “Very useful when you are in a rush, very useful for a first draft, but not a substitute for knowing what to do with it if you have a high bar as a designer and want to do differentiated stuff.”

With the platform, users can also integrate their own images, text, or code into the project. Then, they can “Stitch” screens together and click “Play” to view the app workflow, and the platform can even generate the next logical screens so users can see the entire journey mapped out.

Lastly, through the Stitch MCP server and SDK, Stitch can also be integrated into other tools and skills. Stitch is available to all users for free via Google Labs. I tried it myself as someone with barely any background in website or app design, and I found the process intuitive and a lot of fun.

It's refreshing to see a generative AI tool built for creatives that augments the creative process rather than replacing it. Unlike AI image generators, it helps you find inspiration or build on an existing foundation. It's also a natural next step after vibe coding. Where vibe coding brings an app from concept to tangible form, Stitch helps users visualize the entire design process, ensuring it aligns with their vision and delivers a better user experience.

TOGETHER WITH TWELVELABS

There's A New Breakthrough In Video Embeddings

Traditional multimodal video embedding models collapse visual, audio, and speech into a single vector. That simplifies implementation, but it's terrible for searching. Dialogue gets diluted by background sounds, a sound-event query gets buried by visual similarity, and you're stuck unable to find the stuff you actually need.

The good news is, TwelveLabs has addressed this with their video embedding model: Marengo. Marengo outputs separate embeddings for visual content, audio, and transcription, which means you can adjust based on your search needs... and that opens up a whole new world of possibilities. Check out their free whitepaper to explore two architectures for building on that separation, plus learn how to route queries to the right signal without locking in a decision you can't undo.
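To make the idea of per-modality embeddings concrete, here is a minimal Python sketch of weighted search across separate visual, audio, and transcript vectors. It is an illustration only and does not use the Marengo API: the function names (`cosine`, `score`, `rank`), the toy two-dimensional vectors, and the weight values are all hypothetical, and a real system would get its vectors from the embedding model.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def score(query, clip, weights):
    """Weighted sum of per-modality similarities (weights are hypothetical)."""
    return sum(w * cosine(query[m], clip[m]) for m, w in weights.items())

def rank(query, clips, weights):
    """Return clip names ordered from best to worst match."""
    return sorted(clips, key=lambda name: score(query, clips[name], weights),
                  reverse=True)

# Toy data: each clip keeps one vector per modality instead of a single fused one.
clips = {
    "interview": {"visual": [1.0, 0.0], "audio": [0.0, 1.0], "transcript": [1.0, 0.0]},
    "concert": {"visual": [0.0, 1.0], "audio": [1.0, 0.0], "transcript": [0.0, 1.0]},
}
query = {"visual": [0.0, 1.0], "audio": [0.0, 1.0], "transcript": [1.0, 0.0]}

# The same query ranks differently depending on which signal is emphasized.
dialogue_first = {"visual": 0.1, "audio": 0.1, "transcript": 0.8}
visual_first = {"visual": 0.8, "audio": 0.1, "transcript": 0.1}
print(rank(query, clips, dialogue_first))
print(rank(query, clips, visual_first))
```

Because the modalities stay separate, the weighting can be changed at query time without re-embedding any video, which is the flexibility a single fused vector gives up.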

WORKFORCE

Beyond coding, AI's job transformation is coming

AI coding tools look more and more like the canary in the coal mine for an agentic revolution of all forms of knowledge work.

It’s clear that AI coding tools have largely shifted the jobs of software engineers. The capabilities of tools like Claude Code, OpenAI’s Codex, Cursor and more have alleviated the burden of writing code by hand. As such, developers are becoming “product engineers,” rather than software engineers, Kari Briski, VP of generative AI software at Nvidia, told The Deep View. “You're more of an architect now, rather than just a typer.”

These tools have shifted the tide so much that practically anyone can code, from complete novices to hardcore engineers. One tech executive told us how her 12-year-old daughter is using Replit to build an app to track her sports performance and provide tips to improve her technique.

But in a panel at Nvidia GTC on Wednesday, CEO Jensen Huang told an audience of hundreds of attendees in San Jose that “almost all work can be specified as code.” And the panelists, all members of the company’s newly launched Nemotron Coalition, agreed that coding may just be the guinea pig for sweeping automation.

Aravind Srinivas, CEO of Perplexity, said that a model that was trained originally to be stellar at coding “is suddenly able to do all kinds of knowledge work.”

Michael Truell, CEO of Cursor, meanwhile, said that last year’s “economy-wide story” was the widespread adoption of coding agents. “This year [it's] going to be [about how] what started working in coding last year is going to work in all of these other domains.”

Misha Laskin, CEO of ReflectionAI, noted that coding and enterprise automation are just the “first generation” of problems that we can solve, but because these models are “mechanical brains with endless capacity to learn,” we’ll get to a point where solving fundamental problems becomes a matter of allocating economic resources, rather than scientific barriers.

The power that these coding tools represent has led to a fierce battle over the hearts and minds of AI builders. And a clear winner is emerging: At GTC this week, we did a straw poll among the people we met from across the AI ecosystem, asking them which AI coding tools they use. Remarkably, almost everyone we talked to now uses one, regardless of their level of technical expertise. Claude Code was overwhelmingly the most-used tool, mentioned by more than three-fourths of the people we asked. Next were Codex and Cursor. Several people use multiple tools, and most of those use Claude Code alongside another tool.

The fight to come out on top in AI coding is about more than just speeding up the developer workflow. If AI coding tools are a gateway to a larger transformation of knowledge work, then whoever wins over software engineers may win over white-collar industries broadly. According to Ramp data from March, Anthropic already has a massive lead among enterprises, capturing more than 73% of enterprise spending among first-time AI buyers. And with its existing popularity among developers, the company may have an edge in what could be a major metamorphosis of the way we work in the months and years ahead.

LINKS

EU lawmakers support a ban on AI apps that create unauthorised sexually explicit images

In a court filing, the Trump Administration asserted that it did not violate Anthropic’s First Amendment rights by designating it a supply chain risk

Nvidia is reportedly preparing a version of its Groq chips that can be sold to the Chinese market

Apple blocks updates to “vibe coding” apps such as Replit and Vibecode

In a preliminary agreement, Samsung agrees to collaborate with AMD on AI memory solutions

Microsoft considers legal action against Amazon and OpenAI cloud deal

People speculate that a new free model on OpenRouter, Hunter Alpha, is actually DeepSeek’s latest model

Claude Cowork: Dispatch, a new feature in research preview, allows users to run a conversation on the computer while messaging it from their phone.

Personal Intelligence: Google shipped Personal Intelligence in AI Mode in Search to everyone in the US, accessible via Search, the Gemini app and Gemini in Chrome

Codex: OpenAI’s coding agent has subagents or specialized agents that can tackle tasks in parallel to the main agent.

Midjourney: The AI content-generating platform is testing an early version of its V8 model with users. It's meant to be faster, offer better prompt adherence, and more

Deloitte: Google AI Architect

Disney Entertainment and ESPN: Sr. Product Manager, Ads AI/ML

Cognizant: Assistant Vice President, AI Training Data Services SME

JPMorgan Chase: Product Manager, Data and AI

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

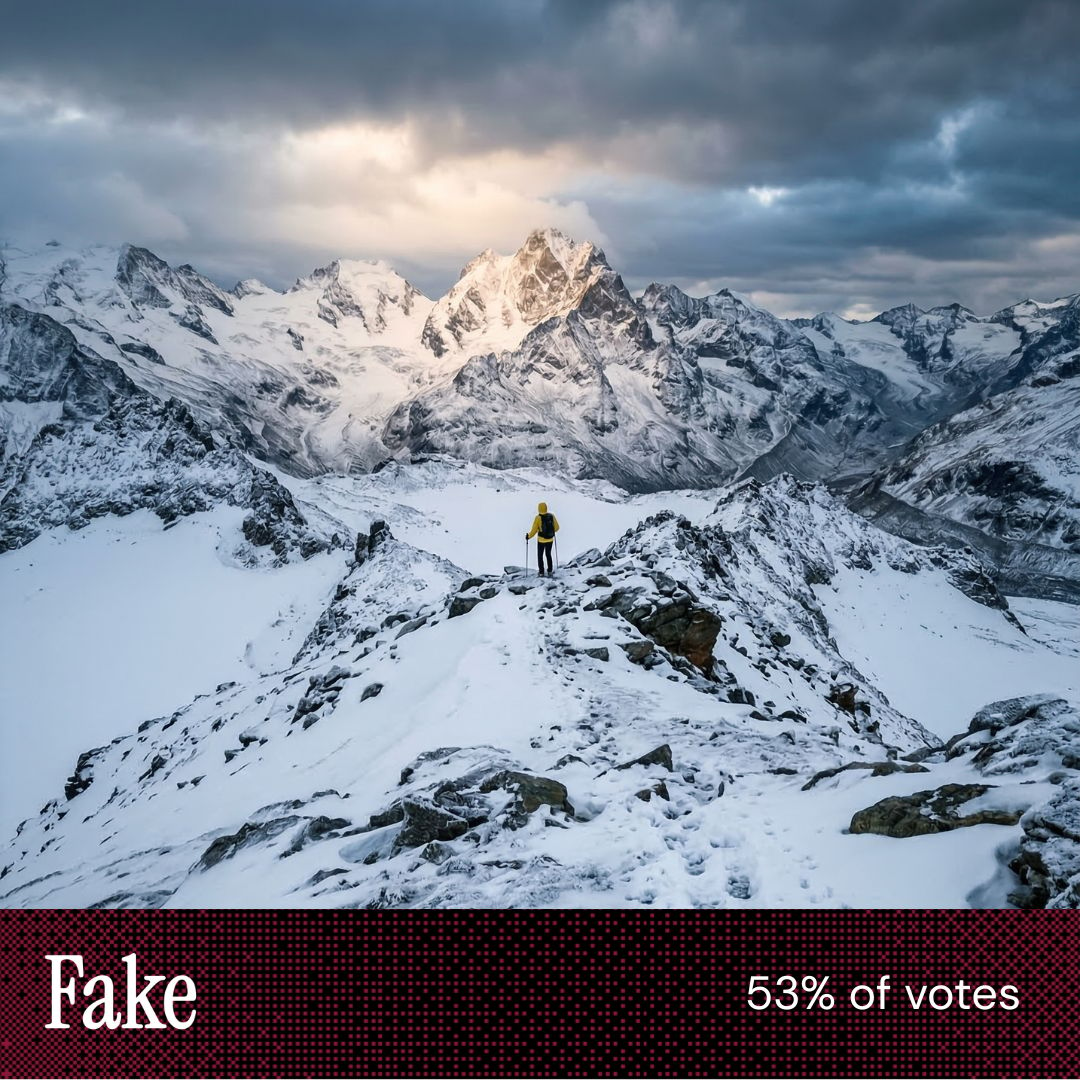

“More detailed photo and realistic proportions of human to nature.” “Duller, less vibrant. Selected the unintuitive pic.” “The sun coming through the clouds in the other image was too perfectly centered on the peak.”

“[This image] shows unnatural footprints [to] the side of the human ones.” “[This image] is way too explicit and formed. Perfect lines that don’t exist in real nature.” “I've been asking myself, ‘who took this photo?’ Someone? Or ‘No one’. I've had more success.”

Take The Deep View with you on the go! We’ve got exclusive, in-depth interviews for you on The Deep View: Conversations podcast every Tuesday morning.

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.