Nvidia unveils new plans for robots and space

Welcome back. Nvidia announced several new bets on Monday at GTC 2026. In space, it's pushing AI compute to the edge, enabling orbital hardware to process data in real time instead of sending it back to Earth. On the ground, it's positioning itself in the center of the robotics era, building the full stack for physical AI without making its own robots. Meanwhile, OpenAI is sharpening its enterprise strategy, working with private equity firms to embed its technology across large portfolios of companies. It's also pulling back on "side quests" and doubling down on AI that can help professionals do work. Look for more Nvidia GTC coverage tomorrow as our team meets with more of the AI builders who are driving the industry forward. —Jason Hiner

1. Nvidia builds the tech stack for the robotics era

2. Nvidia unveils a different vision of AI-in-space

3. OpenAI expands its enterprise AI plans

BIG TECH

Nvidia builds the tech stack for the robotics era

Nvidia is betting on robots, without actually building any robots.

On Monday, CEO Jensen Huang unveiled a flood of physical AI offerings and partnerships, spanning self-driving cars, world models, humanoids and data itself, in his keynote at the company’s annual GTC conference in San Jose. The headline? The ChatGPT moment for physical and embodied AI is here, and Nvidia wants to build its foundation.

“Physical AI has arrived — every industrial company will become a robotics company,” Huang said. “NVIDIA’s full-stack platform … is the foundation for the robotics industry.”

Here are the highlights:

Autonomous vehicles: Nvidia announced Alpamayo 1.5, the latest iteration of the company’s platform built for “reasoning-based” autonomous vehicles. Nvidia debuted the original Alpamayo at CES in January, and it has seen 150,000 downloads since then. Nvidia also unveiled Halos OS, a “safety foundation” for self-driving vehicles. Additionally, Nvidia announced a partnership with Uber to deploy its technology in robotaxis in 28 markets by 2028.

Data pipelines: The company unveiled the Physical AI Data Factory Blueprint, its fix to the biggest bottleneck facing physical AI development. This is an architecture that automates the generation and evaluation of training data, using its Cosmos world models and coding agents to broaden and diversify limited datasets. It’s already in use by several physical AI developers, including Hexagon Robotics, Skild AI and Uber.

Robotics: Nvidia released updates to its Cosmos world models, Isaac simulation framework and GR00T humanoid models. It also announced partnerships with more than a dozen robotics and physical AI companies, including ABB Robotics, Figure and World Labs, to support “production-scale physical AI.”

This isn’t the first time in recent history that Huang has proclaimed AI’s future lies in the real world. After a CES keynote where he was flanked by small robots that looked like they came straight out of WALL-E, Huang told reporters that the speed of development could lead to robots with human-level capabilities as soon as “next year.” Now, Nvidia is putting its money where its mouth is.

GTC COVERAGE BROUGHT TO YOU BY IREN

Unleashing NVIDIA Blackwell Performance on IREN Cloud™

IREN’s Prince George data center campus is capable of housing over 20k NVIDIA Blackwell and Blackwell Ultra GPUs, and they are constructing a new 10 MW (IT load) liquid-cooled data center to support NVIDIA GB300 NVL72 deployments, capable of handling more than 4.5k GPUs.

Supported by IREN’s vertically integrated platform, this installation is part of a broader 4.5 GW power portfolio, offering customers a scalable environment, optimized to run the most complex AI models with confidence. Learn more.

It’s hard to build a robot. Building the actual hardware that powers physical AI is resource-intensive, time-consuming and expensive. So Nvidia is doing everything but actually building the robots. This is a wise approach in a still-nascent market that's heavy on potential and light on products. By providing the foundation for roboticists to build robots, Nvidia reduces its own upfront costs and risk while making itself an essential part of the ecosystem. Rather than competing with other physical AI hardware firms, the company positions itself as the provider of a necessary service for those firms to thrive. And of course, it's Nvidia. It positioned itself at the center of the large language model revolution, and now it’s looking to do the same for AI's next stage.

TOGETHER WITH AIRIA

Want To Give Every Employee The Power of AI?

And, more importantly, want to do it safely and securely? Then you don’t need just any AI platform… you need Airia.

Airia is the enterprise AI platform built to offer organizations the best of both worlds – access to the kind of no-code, low-code, and pro-code tools that boost productivity and innovation for your team, while also maintaining the security you need to ensure compliance. The kicker? You can manage all of these features from one central hub, offering simple, seamless control. It’s the perfect platform to future-proof your enterprise with the power of AI… without ever having to worry about any of the security concerns that keep the rest of us up at night.

HARDWARE

Nvidia unveils a different vision of AI-in-space

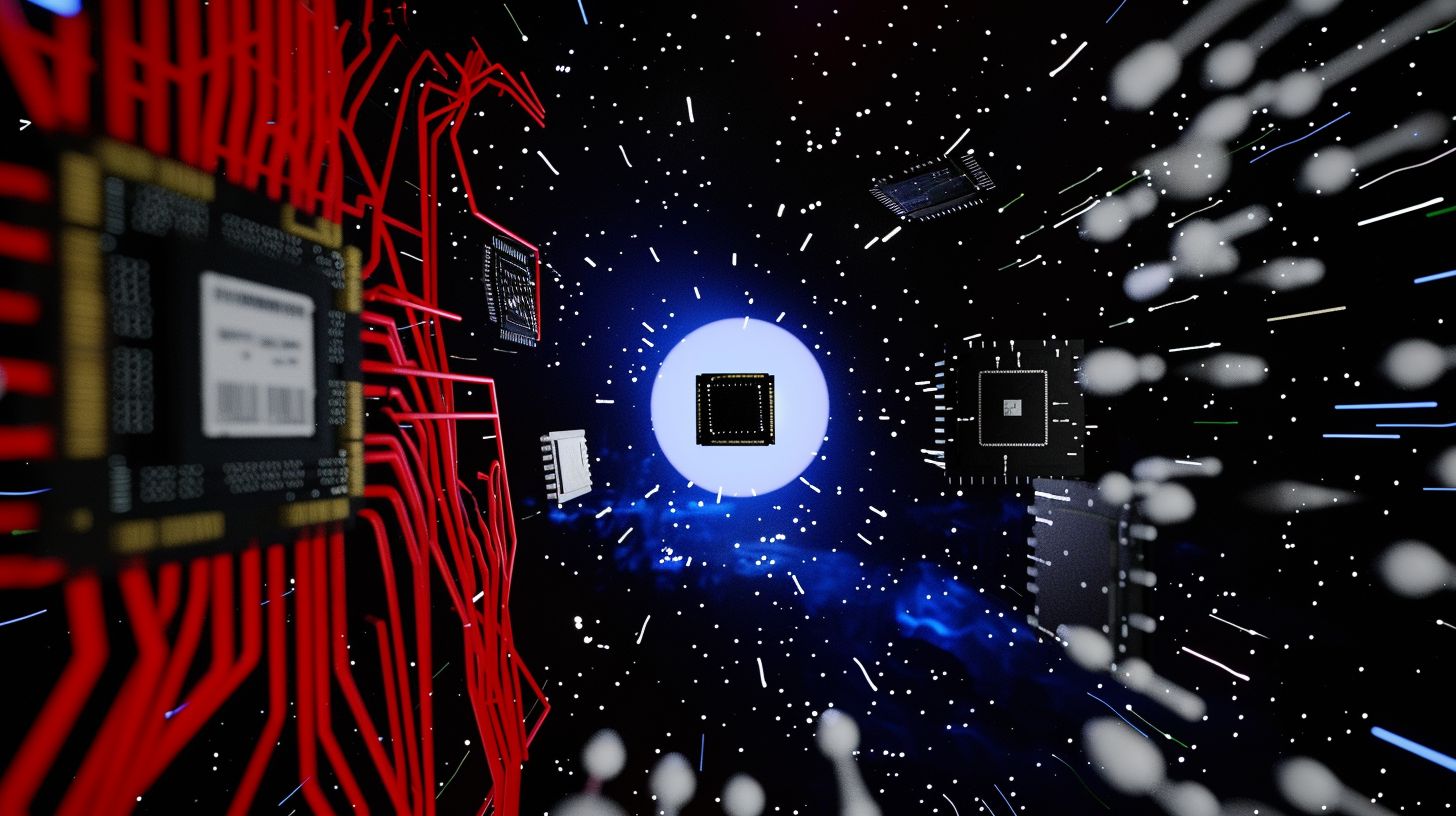

You may have heard of data centers on the ground, but now Nvidia chips are bringing them to space.

At Nvidia GTC on Monday, the company unveiled its first space computing solutions, which, according to Nvidia, will usher in a new era of space innovation by bringing AI compute to orbital data centers (ODCs), geospatial intelligence, and autonomous space operations.

Nvidia joins Google and SpaceX, which have unveiled plans for putting data centers and AI factories in space. In fact, SpaceX became the largest private company in the world by acquiring xAI, with space-based data centers pitched as a major synergy of the deal. However, Nvidia is doing something different. It's enabling space-based devices, such as satellites and spacecraft, to process AI workloads in real time without sending the data back to Earth-based AI factories.

“AI processing across space and ground systems enables real-time sensing, decision-making and autonomy, transforming orbital data centers into instruments of discovery and spacecraft into self-navigating systems,” said Nvidia CEO Jensen Huang.

While Nvidia’s AI-powered space infrastructure includes existing technologies such as the IGX Thor and Jetson Orin, the Nvidia Vera Rubin Space Module is the latest addition to Nvidia’s accelerated platforms for space. Highlights include:

Up to 25x more AI compute for space-based inference than the Nvidia H100 GPU

Large language models and advanced foundation models that operate directly in space

Tightly integrated CPU-GPU architecture and high-bandwidth interconnect for processing massive data streams from space in real time

Space is a challenging environment for electronics due to constraints on size, weight, and power (SWaP), requiring systems to be small, lightweight, and to consume minimal power. Nvidia’s infrastructure was designed to meet those specific needs. Space companies, including Aetherflux, Axiom Space, Kepler Communications, Planet, Sophia Space, and Starcloud, are already using Nvidia's platforms for their missions.

Unlike traditional satellites that beam raw data back to Earth for processing, space computing enables real-time on-orbit analysis. This is critical for disaster monitoring, environmental hazard tracking, and autonomous operations that don't require waiting on human input from mission control.

In Nvidia's announcement, Huang says, "intelligence must live wherever data is generated," which raises an interesting point. The benefits of processing data with AI at the edge are clear, and running AI models locally for personal and organizational use cases has been a strong emphasis since AI became mainstream. Local processing unlocks new levels of speed, reliability, security, and more. Now, if you apply that logic to everything, the number of workflows and the amount of infrastructure that will need to change are vast, with the most visceral example being something as complex and far-reaching as space.

TOGETHER WITH SLACK

How to Get More Out of Microsoft 365 with Slack

Ready to unlock the full potential of your Microsoft 365 investment?

Watch our on-demand webinar to see how Slack's open connected platform turns scattered tools into a cohesive, AI-powered ecosystem.

You'll learn strategies for seamless Microsoft 365 integration and see real-world examples of workflow automation in action. Plus, discover how to create an adaptable foundation that scales with emerging technologies and positions your business for future innovation.

ENTERPRISE

OpenAI expands its enterprise AI plans

OpenAI is pushing deeper into enterprise adoption, now courting private equity firms.

On Monday, a Reuters report revealed that OpenAI is in talks with private equity firms TPG, Bain, Brookfield, and Advent to form a joint venture with a pre-money valuation of $10 billion, citing people close to the matter.

Shortly after the report was published, Fidji Simo, CEO of Applications at OpenAI, confirmed it, saying OpenAI is “excited to be building a deployment arm and will share more details soon.” For OpenAI, the benefits include capturing more enterprise clients, a segment in which it has lagged behind competitors.

In an interview with The Wall Street Journal, Simo said that OpenAI was reducing its "side quests" and experimental projects and dedicating more resources to coding and working with enterprise businesses.

In her X post, Simo said the new deployment arm has been in the works since December and focuses on embedding engineers directly within enterprise clients to enable closer collaboration. The Reuters report provided more information on how the deal would operate, including:

The private equity investors would commit $4 billion in return for equity stakes, a say in how OpenAI’s solutions are deployed across their portfolio companies, board seats and early access to OpenAI’s enterprise tools.

TPG would commit the most capital, serving as the anchor investor, while Advent, Bain, and Brookfield would be co-founding investors.

The firms would get an equity stake of approximately $1 billion, though the source told Reuters the numbers and plan details were subject to change.

“This is essentially part of running a sales motion. It will help them [OpenAI] sell more of their services/tokens,” said Eric Compton, director of equity research, technology at Morningstar.

Beyond the benefits listed in the deals, private equity firms also have strategic reasons to participate.

“They are 100% motivated to 'not fall behind' and risk not having successful exits for their assets, so having a direct seat at the table in this process will help further that goal in their eyes,” added Compton.

Simo highlighted the urgency and demand for AI solutions among enterprises, noting that nearly 1 million businesses are running on OpenAI products, with API usage jumping 20% in the week after GPT-5.4 launched. She added that Frontier, the platform OpenAI launched last month to help enterprises manage AI coworkers, is seeing more demand than the company can handle.

Private equity firms of this scale typically hold hundreds of companies each in their portfolios, and those companies rely on the firms for decision-making, including software investments. Targeting these firms is therefore a sound strategic move for AI companies, as a single deal can put their technology in front of many companies at once. OpenAI is not the only one doing so. Last week, The Information reported that Anthropic is in talks with Blackstone and other private equity firms to form a similar deal. The timelines and terms of these deals could shake up which companies lead in the enterprise space.

LINKS

Nvidia-backed startup Reflection AI invests billions in Korea data center

Meta agreed to spend $27 billion on Nebius AI architecture in a new deal

Sam Altman told college sophomores they will graduate into a world with AGI

Google is “not ruling them [ads] out” of Gemini, according to Wired

Mistral introduces Leanstral, an open-source agent designed for Lean 4

Amazon, Nvidia partner on Alexa Custom Assistant for in-vehicle experiences

Manus: Meta’s general AI agent launched My Computer, allowing it to run on your local machine.

Perplexity Computer: The agentic platform has now rolled out to all Android users.

Kling AI: The AI video-generating platform made its Kling AI Team Plan available on the desktop app and web.

LTX Studio: The AI creative suite for video production now supports translation in 175 languages, with realistic lip sync, for LTX Studio for Enterprise.

Claude: Anthropic is doubling the usage outside peak hours for all users for the next two weeks as a thank-you.

JP Morgan Chase: Product Manager, Data and AI

Handshake: LMMS Specialist - AI Trainer

Capgemini: V&V Engineer - AI-Driven Testing & Validation

FedEx: Senior Solutions Architect

A QUICK POLL BEFORE YOU GO

Is putting AI data centers in space a good idea or a bad idea?

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

Take The Deep View with you on the go! We’ve got exclusive, in-depth interviews for you on The Deep View: Conversations podcast every Tuesday morning.

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.