OpenAI's 'superapp' signals the agentic future

Hello, friends. Ray Kurzweil's insistence that AGI remains on track for 2029 is a reminder that AI's trajectory still carries both conviction and consequence. At the same time, Stanford’s latest data shows that AI's growth is outpacing trust, with adoption surging while policy, safety, and public sentiment lag behind. Against that backdrop, OpenAI’s coming superapp points to where this is all headed: a unified, agent-driven tool for knowledge work. Merging browser-based multitasking with agentic automation will signal a shift from apps we use to systems that act, bringing the OpenClaw model to the mainstream. —Jason Hiner

1. OpenAI's 'superapp' signals the agentic future

2. Why Kurzweil still sees AGI coming by 2029

3. AI’s surge is widening gaps in trust and policy

PRODUCTS

OpenAI 'superapp' prepares for agentic leap

OpenAI's forthcoming "superapp" is one of the worst-kept secrets in AI right now.

During a walk in San Francisco last month, I told The Deep View CEO, Faris Kojok, that the most challenging part of using OpenAI's products was switching between the three desktop apps. Apparently, I wasn't the only one thinking that, because less than a week later, reports began circulating that the company would merge the three apps into a single superapp.

Since then, the OpenAI team has been publicly discussing it on Twitter and in podcasts. The most prominent example came when OpenAI President Greg Brockman appeared on the Big Technology Podcast and told Alex Kantrowitz when and how the new superapp would be rolled out.

"We're taking incremental steps to get there. Over the next couple months, we should have shipped the complete vision … but it's going to come in pieces," said Brockman. "The place that we're starting today is with the Codex app, which is really two things in one. It's a general agent harness that knows how to use tools, and it's also an agent that knows how to write software. That general agent harness can be used for so many different things… So we're going to make the Codex app so much more usable for general knowledge work."

Why would OpenAI bring the three apps together? First, let's look at what they do.

ChatGPT desktop app: This is the traditional ChatGPT app that has been around for the past several years. It makes it easy to view and search all of your past chats and organize them into projects. You can also quickly search for custom GPTs and apps to extend the chatbot's functionality by adding features from tools like Photoshop, Canva, insurance apps, and hiring apps.

Atlas web browser: In October 2025, OpenAI launched the ChatGPT Atlas web browser. Following in the footsteps of Perplexity Comet, launched in mid-2025, OpenAI built its browser on Google's open-source Chromium platform, which underpins most of today's popular browsers. Very quickly, Atlas became my preferred way to use ChatGPT for one simple reason: its ability to open multiple tabs, keeping several different queries quickly accessible.

Codex app: As Brockman noted, the app has two parts. Most people think of it as a coding tool. But the second part is a general-purpose agent that can carry out tasks for you. And that's where the combination of a web browser and an AI agent gets interesting. If you can open multiple instances of ChatGPT in tabs, then you can use your Codex agent to carry out various tasks, all at the same time.

The concept of a superapp has been a common refrain in the tech industry for years. The first successful one was China's WeChat app, which combined text messaging, video calling, file sharing, digital payments, and mini apps that add ride-hailing, food delivery, and event ticketing. That's what Elon Musk wanted to create when he bought Twitter. But what OpenAI is doing is a little different: it's not on mobile, but on the computer, where knowledge workers do almost all their work. When the superapp combines the search and organizing capabilities of the traditional ChatGPT app with the multitasking environment of Atlas and the agentic automation of Codex, it could transform ChatGPT into a product much more akin to OpenClaw—but accessible to its 900 million users.

TOGETHER WITH CRUSOE

Your model. Our inference engine. Breakthrough performance.

Eliminate the "memory wall" causing inference bottlenecks. Crusoe’s inference engine is powered by MemoryAlloy™ technology to deliver larger shared memory capacity so you can serve more users at lower latency, with better throughput, and less wasted compute.

The result? Breakthrough time-to-first-token speed and up to 5x higher throughput. Work with our team to optimize performance for your own fine-tuned model so you can scale without compromise.

CULTURE

Why Kurzweil still sees AGI coming by 2029

Last week's HumanX event offered no shortage of meaningful AI conversations, whether in panels, one-on-one meetings, or casual exchanges. One conversation, however, stayed with me.

Ray Kurzweil is an inventor, futurist, and one of the most consequential figures in the history of AI. His pioneering work on OCR and text-to-speech laid critical groundwork for the AI revolution unfolding today, and his books, including The Age of Intelligent Machines and The Singularity Is Near, have shaped how generations of thinkers and builders understand where technology is headed.

He has also had a pretty remarkable track record of accurately predicting where technology will go next, and one prediction in particular has never stopped turning heads: in 1999, he predicted that human-level intelligence, what we now call AGI, would arrive by 2029.

In a panel discussion alongside his son Ethan Kurzweil, co-founder and managing partner of the venture firm Chemistry, and Shirin Ghaffary, AI reporter at Bloomberg, Ray shared further insight into where his prediction stands today and made clear that he still believes it will come to pass.

“I predicted that there would be a several-yearfold increase where people would start to face AGI, not everybody would, and everybody would face AGI a few years later, so that's consistent with that prediction that we would start now,” said Ray.

Broadly speaking, AGI refers to artificial intelligence capable of understanding, learning, and reasoning at or beyond a human level. It's a prospect that has prompted serious concern across many quarters, from questions of job stability and the potential for weaponization, to deeper anxieties about humanity's role in a world shaped by that kind of intelligence.

Ray, however, holds a far more optimistic view. During the panel, he argued that the positive outcomes of AI will ultimately outweigh the negative, and that nearly every industry, including housing and food, will be transformed through AI breakthroughs.

When asked about the rapid pace of discovery driven by fierce competition in the space, he welcomed that dynamic as well, noting that competition fuels exponential growth and that it is precisely that competition that will make the next ten years “absolutely fantastic.”

“Even though some people hold a negative premise about [AGI], we’ve been able to create something that actually represents knowledge, and that's really fantastic: AI that can expand our knowledge,” added Ray.

During the panel, Ray's mention of his 63 years of experience in the field drew audible applause from the audience. That depth of history is precisely what lends his perspective such weight, particularly given that he predicted AGI long before it became a marketing term wielded by industry leaders to generate headlines, move products, or court investors with visions of a grander future. Whatever your stance on the term itself, his core argument, that AI will break through every industry, is already proving true and shows no sign of slowing.

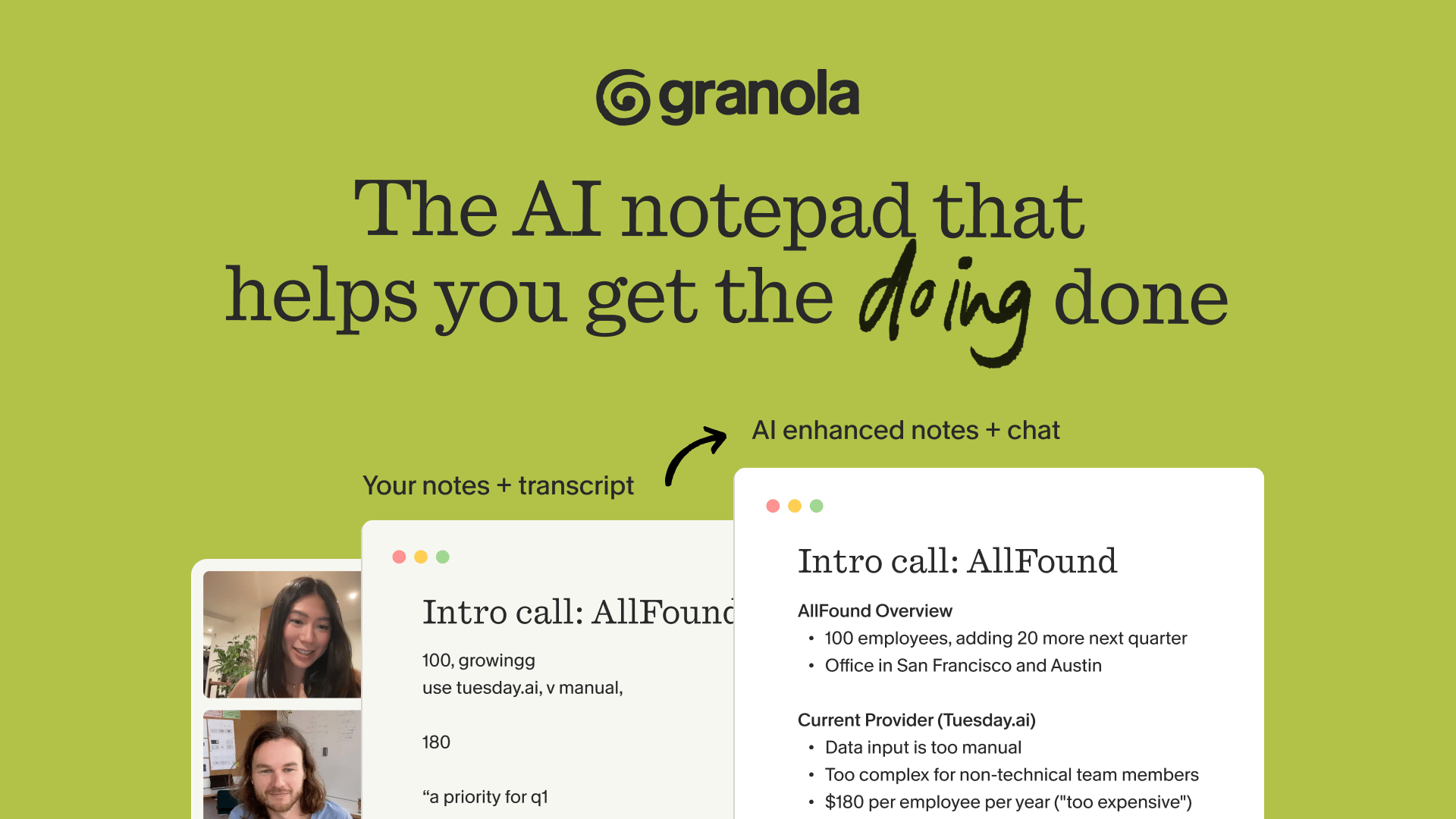

TOGETHER WITH GRANOLA

The Deep View Team is Obsessed with Granola

We’re not talking about the food (although a few of us do have an unhealthy interest in that, too). We’re talking about the AI notepad that turns your chicken scratch into something elegant, organized, and downright beautiful.

Since day one, Granola has checked all the boxes we look for in a notetaking app, like…

Working seamlessly across our various teams and workflows ✅

Documenting, summarizing, and prioritizing all our discussions, so we can focus on what’s most useful and relevant ✅

Having full context across all meetings for a holistic view of trends and patterns ✅

We estimate Granola saves us around 10 hours per week, per person – that’s no small feat. Try it for yourself and get your first month free with code THEDEEPVIEW.

RESEARCH

AI’s surge is widening gaps in trust and policy

The rapid pace of AI development can often feel like endless noise. Stanford University wants to provide some clarity.

On Monday, the university’s Institute for Human-Centered Artificial Intelligence, or HAI, released its 2026 AI Index report, offering a comprehensive view of some of the most significant trends shaping the AI industry. Big picture: AI is growing and transforming faster than ever, and no one knows for sure how it’s going to impact our future.

Here are some key takeaways:

AI capability and adoption are growing rapidly, but safety measures can’t keep up. More than 90% of notable frontier models were released in 2025, with several meeting or exceeding human baselines on PhD-level work. Organizational adoption reached 88%, and 80% of university students use generative AI. But reporting on responsible AI benchmarks remains uneven, and “documented AI incidents” rose to 362 in 2025, up from 233 in 2024.

The US leads in some ways and lags in others. The report claims the US-China model gap has effectively closed, with Anthropic’s top model leading China’s by 2.7%. And while the US leads in data center density and AI investment, the country’s ability to attract talent has declined, with the number of AI researchers and developers moving to the US falling by 80% in the last year alone.

Adoption is on the rise, but formal education isn’t. Consumers are getting substantial value from AI tools they can access for free, according to the report. Generative AI has reached 53% of the population over the last three years, but adoption is uneven across countries. The US, for instance, sits at a roughly 28% AI adoption rate. Though four out of five students use it in class, only half of middle and high schools have AI policies in place, and only 6% of educators say those policies are clear and concise.

And broadly, the public still isn’t optimistic about how AI will shape the future. While 73% of AI experts have a positive sentiment towards how AI will impact their jobs, only 23% of the public shares that sentiment, the report finds.

Stanford’s research signals that AI is causing all kinds of cracks. There’s a growing divide between public trust and stakeholder optimism, and while adoption is on the rise, usage varies region to region. Meanwhile, hype and industry FOMO are pushing the tech beyond our ability to regulate it, leaving governments and safety organizations alike reeling as they try to keep up. Reports like these offer a snapshot of where AI stands at a given moment, but in an industry that shows no signs of slowing down, these figures could change month to month, week to week or even day to day. Nevertheless, Stanford's moment of clarity in this report is welcome.

LINKS

Sam Altman’s home was targeted in a second attack

Meta reportedly builds an AI version of Zuckerberg to interact with staff

OpenAI internal memo highlights alliance with Amazon

Microsoft exec says company explores “OpenClaw in an enterprise context”

Anthropic may be adding an app-building tool like Lovable to Claude

Apple reportedly works on display-free smart glasses

Meta AI: The app soars in the App Store due to Muse Spark release

Ollama: Ollama 0.20.6 was launched with “improved Gemma 4 tool calling”

NotebookLM: Certain Google for Education plans now have higher limits

ChatGPT: There are more paid plan options, broken down in the post linked

Cursor: Version 3 got updates including agent splitting for multi-tasking

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.