The Deep View

U.S. unveils AI plan, governance debate begins

Welcome back.

1. U.S. unveils AI plan, governance debate begins

2. Microsoft pushes into top tier of image AI

3. Study: AI reshapes cybersecurity priorities

GOVERNANCE

New U.S. AI framework deepens policy tensions

As AI capabilities advance rapidly, the White House has proposed a federal framework to regulate the technology and preempt state laws.

On Friday, the White House released its long-awaited National AI Policy Framework, a proposal that aims to declaw state regulatory efforts. The administration argues that state AI laws “impose undue burdens” and wants to replace them with “minimally burdensome” regulation.

Though the document claims that a national standard should “respect key principles of federalism” and not undermine states' traditional powers to enforce their own laws, the White House’s framework suggests that states should not be able to regulate AI development at all, as it is an “inherently interstate phenomenon” with national and foreign implications.

The framework also argues that state laws should not be permitted to penalize AI developers for a third party’s unlawful conduct involving their models. According to Politico, the administration will publish an evaluation of existing state AI laws that it deems “onerous.”

The most optimistic take in the industry is that it will kickstart some good conversations.

As for federal regulation, the framework outlines several major risks it wants new laws to address, including:

- Protecting children by requiring “age-assurance tools,” parental account controls, and strong protections against sexual and self-harm-related content
- Safeguarding and “strengthening American communities” with utility ratepayer limits and stronger law enforcement tools to fight “AI-enabled scams against seniors”
- Supporting a “balanced approach” to intellectual property that allows AI to train on copyrighted materials while also strengthening creator rights
- Preventing AI from being used to suppress free speech
- Creating “regulatory sandboxes” and federal datasets to keep the U.S. at the forefront and “reduce regulatory barriers to innovation”
- Creating an “AI-ready workforce” by expanding training programs and AI-related apprenticeships

Michael Kratsios, director of the Office of Science and Technology Policy, said in a post on X that the framework seeks to ensure “every American benefits from AI.”

Though the framework addresses some of the biggest risks that this technology poses, it’s also full of contradictions, Samir Jain, VP of policy at the Center for Democracy and Technology, said in a statement to The Deep View. For instance, the document says that the government should not coerce AI companies to ban content based on partisan or ideological agendas, “yet the Administration’s ‘woke AI’ Executive Order this summer does exactly that,” said Jain.

“The White House’s high-level AI framework contains some sound statements of principles, but its usefulness to lawmakers is limited by its internal contradictions and failure to grapple with key tensions between various approaches to important topics,” Jain said.

Government regulation has long lagged behind technology development. AI, however, is moving far faster than any previous tech boom, which makes the problem more urgent. Regulation is essential to protecting people from the risks this technology poses, and so far, states have been on the front lines of those protections. Federal regulation and a consensus on how to govern AI may need to arrive sooner rather than later; otherwise, we remain exposed to the worst possible outcomes. Whether Washington can forge the kind of bipartisan consensus needed to enact broad AI governance that won't be whipsawed by partisan shifts in power remains an open question.

TOGETHER WITH BRIGHT DATA

Kernel AI scaled enrichment + agentic research while decreasing failed lookups

If you’re building AI agents, you’ve felt it: web access breaks, retries spike, and coverage quietly drops. Kernel AI used Bright Data to get the scale and reliability needed for enrichment and agentic research with fewer failed lookups, higher throughput, and support for enterprise volumes.

Bright Data supports AI teams across the board by keeping web access reliable across sites and geos, even through anti-bot measures, dynamic pages, rate limits, and layout changes. Results are delivered as structured JSON/CSV directly into your stack (API, cloud storage, or scheduled exports).

Trusted by 70%+ of leading AI labs.

PRODUCTS

Microsoft pushes into top tier of image AI

Microsoft has faced its share of headwinds lately, but it still has plenty of horses in the AI race.

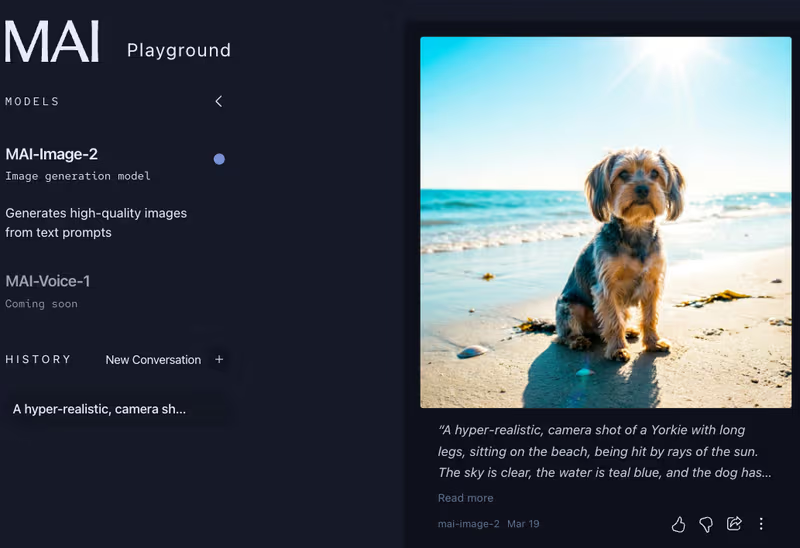

On Thursday, the company launched its next-generation text-to-image model, MAI-Image-2. Microsoft says the model was built with feedback from creatives, and that approach already seems to be paying off.

The model ranked third overall on the Arena.ai text-to-image leaderboard, an open-source project where people anonymously vote on the best models from 16 labs. Microsoft’s model trails only Google’s Gemini 3.1 Flash and OpenAI’s GPT-Image-1.5 High Fidelity, and it outranked models from xAI, Black Forest Labs, Alibaba, and more.

The strong ranking reflects several performance improvements, which, according to Microsoft, include:

- Text: The model can produce text that is both legible and correctly spelled, which is useful for infographics, slides, posters and more.
- Details: According to Microsoft, the most exciting creative work lives in the finest details, so the model was built to handle them.
- Photorealism: Aimed at creatives who want images to feel as realistic as possible, the model can produce natural lighting, accurate skin tones, and realistic environments.

Everyone can preview MAI-Image-2 today on the MAI Playground with a Microsoft account. I tried it out myself, typing in a detailed description of my dog and the setting, and was pleased with the results, which looked really similar to my Jimmy-dog.

The full experience is starting to roll out on Copilot and Bing Image Creator. API access is available today for select Microsoft customers, and it will be available in Microsoft Foundry soon.

Microsoft's AI Superintelligence team is deep with talent, and this launch points to exactly where the company should be directing its energy: building proprietary models capable of competing with the best in the industry. For much of its AI journey, Microsoft has been content to serve as a distribution layer for OpenAI's technology. That was a defensible strategy early on, but OpenAI has since made its own products compelling enough to use directly, cutting out the need for a Microsoft intermediary. The company must now focus on reclaiming its competitive edge, and building better AI is the right place to start.

TOGETHER WITH BRIGHT DATA

70%+ of leading AI labs are already using Bright Data.

Bright Data is the missing piece of AI infrastructure you can’t scale without: reliable web access for agents and pipelines across sites, geos, and constant change. It keeps access working through anti-bot measures, dynamic pages, rate limits, and shifting layouts, so your systems can reach the public web when it matters.

Bright Data is used for training pipelines, retrieval/RAG, enrichment, evals, and real-time monitoring, and when you need it, structured outputs can be delivered directly into your stack.

Let us know your requirements, and we’ll get you on the fastest path to production.

POLICY

Study: AI reshapes cybersecurity priorities

As AI gets smarter, AI-powered cybersecurity attacks are getting faster and more complex.

On Wednesday, EY published a study finding that, among the 500 senior corporate security leaders surveyed, 96% say AI-enabled cybersecurity attacks are a significant threat to their organizations, and 48% attribute one-quarter of their cybersecurity incidents in the past year to AI.

This perception is rooted in reality, as AI is enabling more sophisticated attacks.

“We are seeing a high-stakes crossroads where AI is weaponizing the digital landscape just as it fortifies defenses,” Ganesh Devarajan, EY Americas Consulting Cyber Risk Practice Leader, told The Deep View. “The velocity and sophistication of attacks have scaled in a way that traditional manual defenses struggle to match.”

Despite the threat, many of these leaders feel ill-equipped to meet it: less than half report strong confidence in their organization’s ability to defend against a major AI-enabled security breach. The solution seems to lie in AI itself, as 99% believe its strategic use will transform their organization’s proactive and defensive cybersecurity strategies.

As a result, companies are leaning toward a more aggressive defensive approach, which is driving major changes in budgets:

- 85% of leaders surveyed said their current budget is insufficient to meet the needs of AI-enabled threats
- The share of organizations spending at least a quarter of their cybersecurity budget on AI solutions is expected to roughly quintuple in two years
- That share will grow from only 9% of organizations to a whopping 48% over the same two-year period

According to Devarajan, increasing budgets is a sound defensive strategy because existing manual security processes cannot keep pace with AI-driven threats. By shifting capital, he explains, companies can move from a reactive posture to a more transformative one.

Another way to justify spending on AI-driven defenses is to consider the cost of the AI systems they protect, as DJ Sampath, SVP, Products AI at Cisco, pointed out. He compared it to buying an expensive piece of tech equipment, which you would want to insure to protect the investment.

“When you're going to go out and introduce AI, or a very expensive thing inside of your system, it's going to touch every single part of your organization,” said Sampath. “You better make sure that you have the right kind of security and safety strategy in place, and if that means spending a little bit more, you’ll want to do that.”

Cybersecurity has long been a cat-and-mouse game: as technology advances, so do the attacks and risks. The rise of generative AI has only accelerated that cycle, making it easier than ever for bad actors of all experience levels to create realistic phishing content and elaborate system attacks. As a result, using more sophisticated AI tools to defend against AI attacks is becoming more important and less optional. Fortunately for organizations, this doesn’t require building their own LLMs, agents, or AI defenses. At this point, many enterprise SaaS offerings are easy to deploy and target specific business needs.

The Deep View is written by Nat Rubio-Licht, Sabrina Ortiz, Jason Hiner, Faris Kojok and The Deep View crew. Please reply with any feedback.

Thanks for reading today’s edition of The Deep View! We’ll see you in the next one.

Take The Deep View with you on the go! We’ve got exclusive, in-depth interviews for you on The Deep View: Conversations podcast every Tuesday morning.

If you want to get in front of an audience of 750,000+ developers, business leaders and tech enthusiasts, get in touch with us here.